The Question Hiding Underneath ChatGPT’s Scale

OpenAI had the kind of week that makes everyone reach for the obvious story: every headline seemed to point in the same direction.

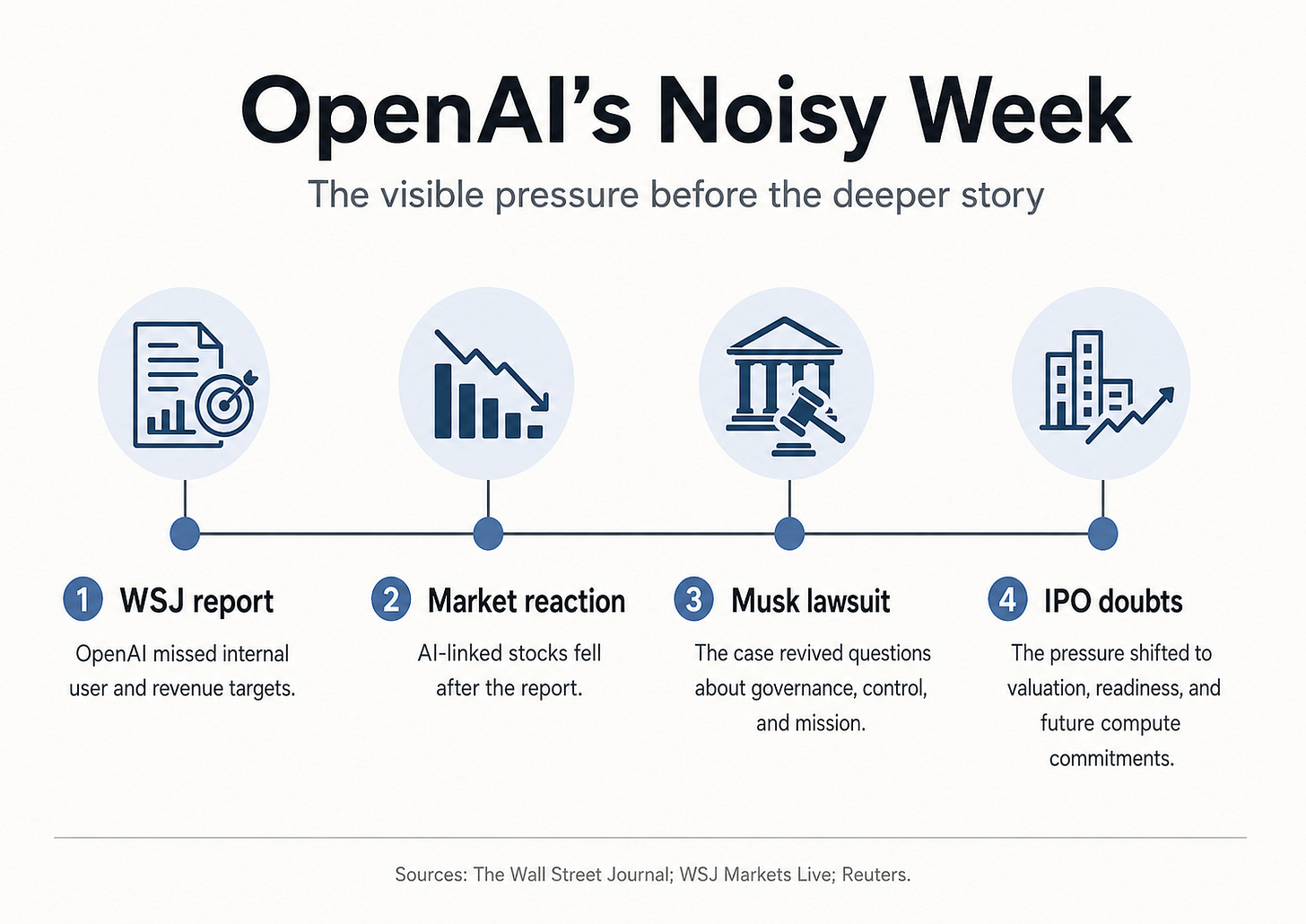

Last week’s All-In Podcast1 opened with the pressure around OpenAI: missed user targets, missed revenue targets, IPO doubts, and a courtroom fight with Elon Musk. David Sacks framed it as a bad week for OpenAI. The week also exposes a deeper question: what kind of company has OpenAI become?

It is also easy to read badly.

The loud story is the lawsuit. The more important story sits in the operating model. OpenAI is trying to scale one of the most capital-intensive technology businesses ever built while the physical world is struggling to keep up: power, data centres, chips, grid connections, transformers, and the cost of turning model intelligence into paid work.

Can OpenAI scale intelligence fast enough now that the constraint has moved from software into infrastructure?

And for users, founders, and investors, the practical question is just as direct: is ChatGPT still the AI platform to bet on?

1. The noisy week

The visible facts are simple.

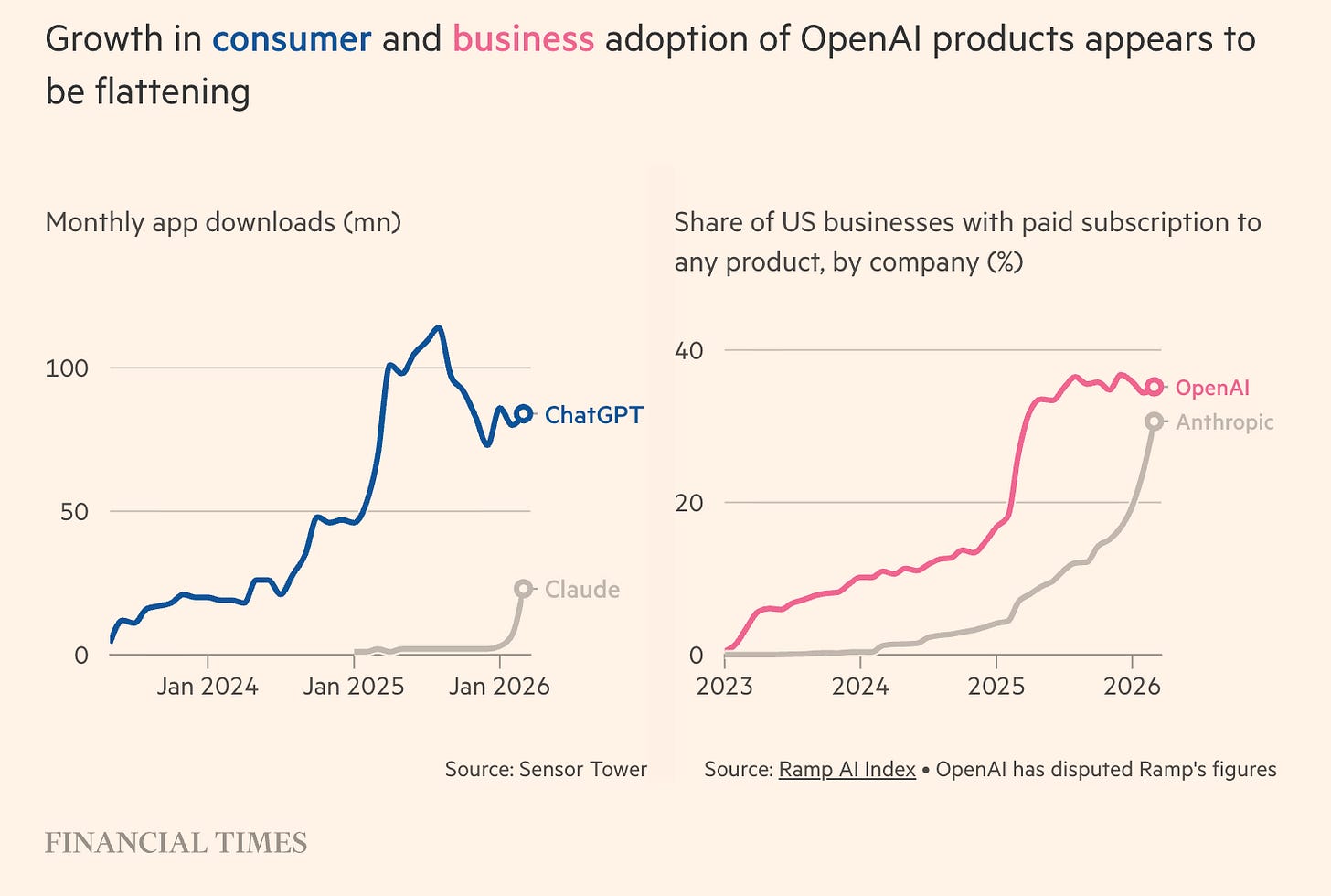

The Wall Street Journal reported that OpenAI missed internal targets for user growth and revenue. The company had expected to reach 1 billion weekly ChatGPT users before the end of 2025, but had not done so several months into 2026. The same report said OpenAI had also missed its 2025 revenue target for ChatGPT, while carrying very large future compute commitments.2

Reuters reported that OpenAI CFO Sarah Friar had raised concerns internally about future computing contracts if revenue growth does not keep pace. OpenAI pushed back publicly. Sam Altman and Sarah Friar said they were aligned on buying as much compute as possible.3

Then came the trial.

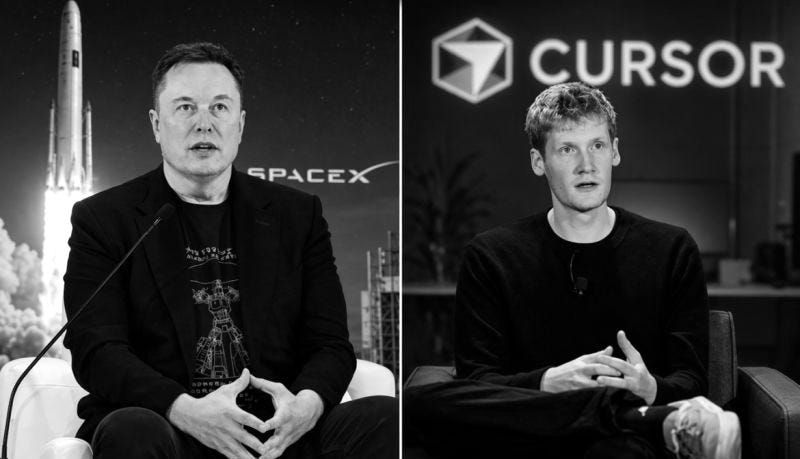

Reuters reported that Elon Musk’s lawsuit against OpenAI is now putting the company’s nonprofit-to-profit transition, founder incentives, Microsoft relationship, and internal governance under the microscope. Greg Brockman, OpenAI’s president and co-founder, disclosed a stake estimated near $30 billion and financial ties to Sam Altman. Musk argues that OpenAI betrayed its original mission. OpenAI argues that Musk is trying to regain influence and slow a competitor.4

This gives the week a clean media shape.

OpenAI looks stretched. The CFO worries about compute. The CEO wants to move faster. The old nonprofit story collides with one of the largest private-company valuations in the world. Elon Musk, now building xAI, is back in the room through the court door.

The Wall Street Journal’s Tim Higgins framed the same week as a test of Sam Altman’s Silicon Valley lore: “the founder who made OpenAI look almost inevitable is now facing missed-target scrutiny, IPO pressure, Anthropic momentum, and Elon Musk in court.”5

That is enough for a good media narrative. It is not enough to understand OpenAI.

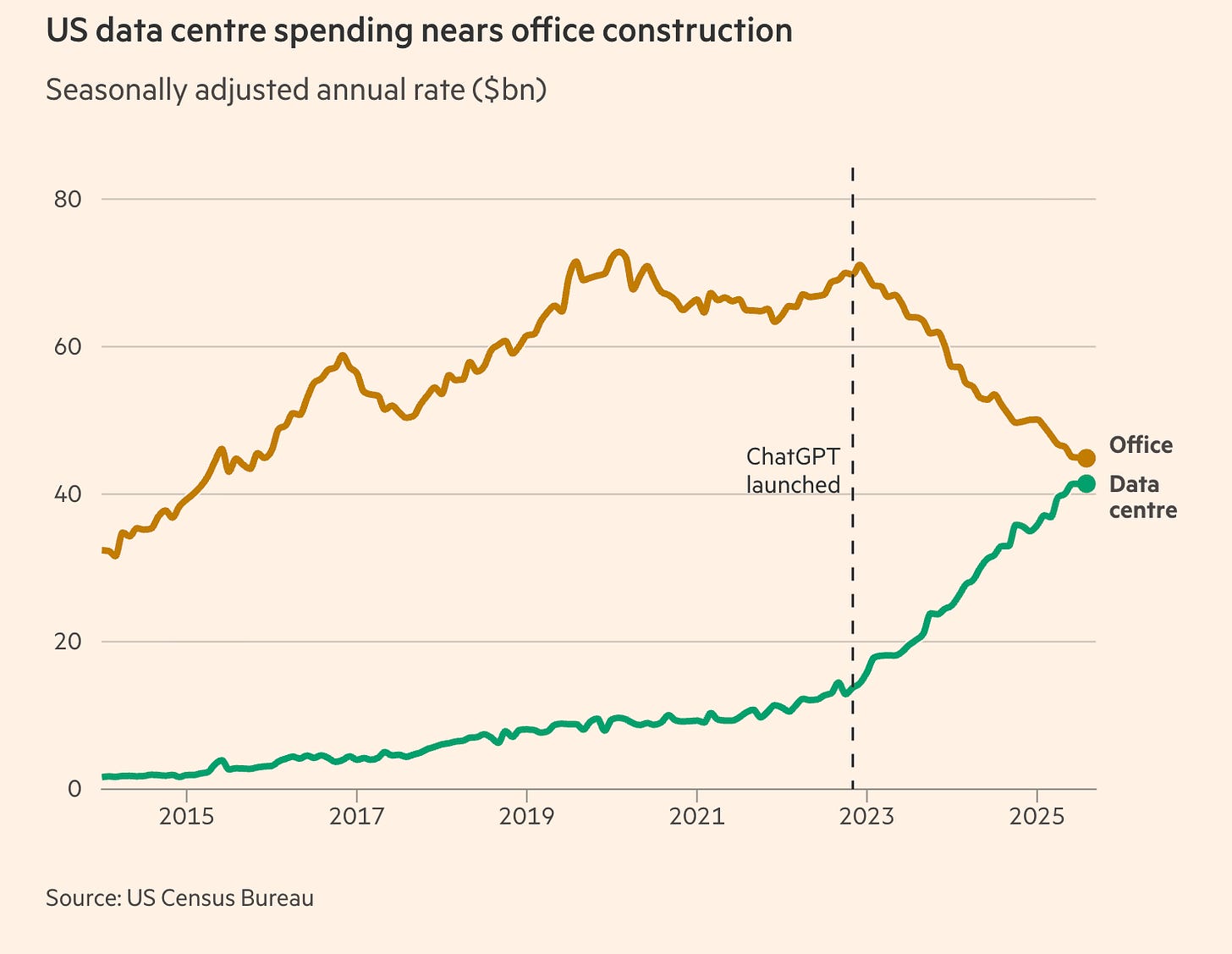

The missed targets matter. Governance matters. The lawsuit matters, at least for control and perception. But none of these explains the deeper tension. OpenAI is being judged like a software company while its economics increasingly look like infrastructure.

That mismatch is where the story gets interesting.

2. OpenAI is still a product force

The simple bear case says: OpenAI missed targets, therefore demand is weaker than expected.

That is too clean.

OpenAI still has enormous consumer reach. ChatGPT remains one of the most important software products ever launched. Even if the company missed its internal billion-user target, the scale is extraordinary. The problem is that internal targets, public perception, product strength, and infrastructure capacity are now all tangled together.6

OpenAI is also still shipping.

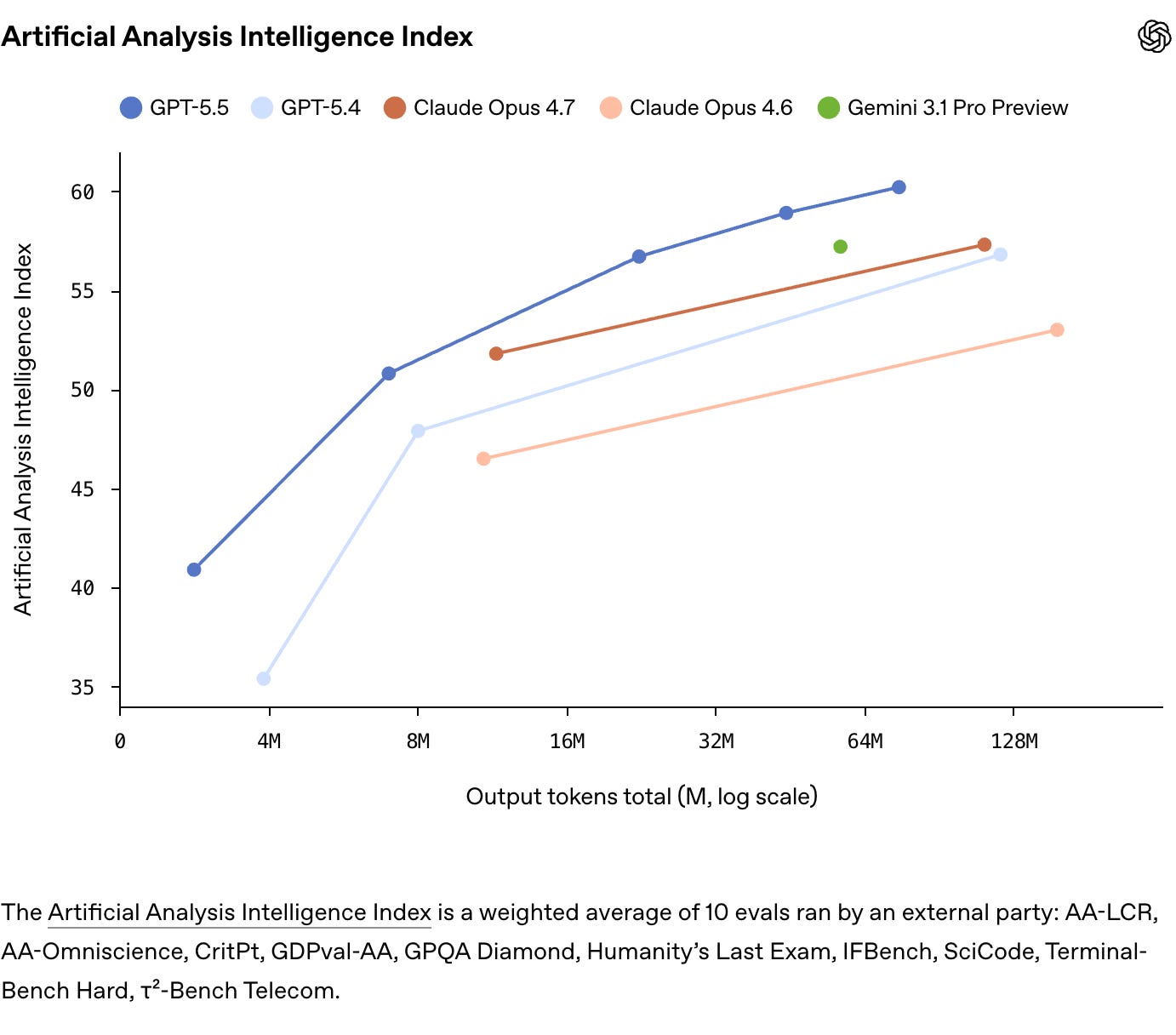

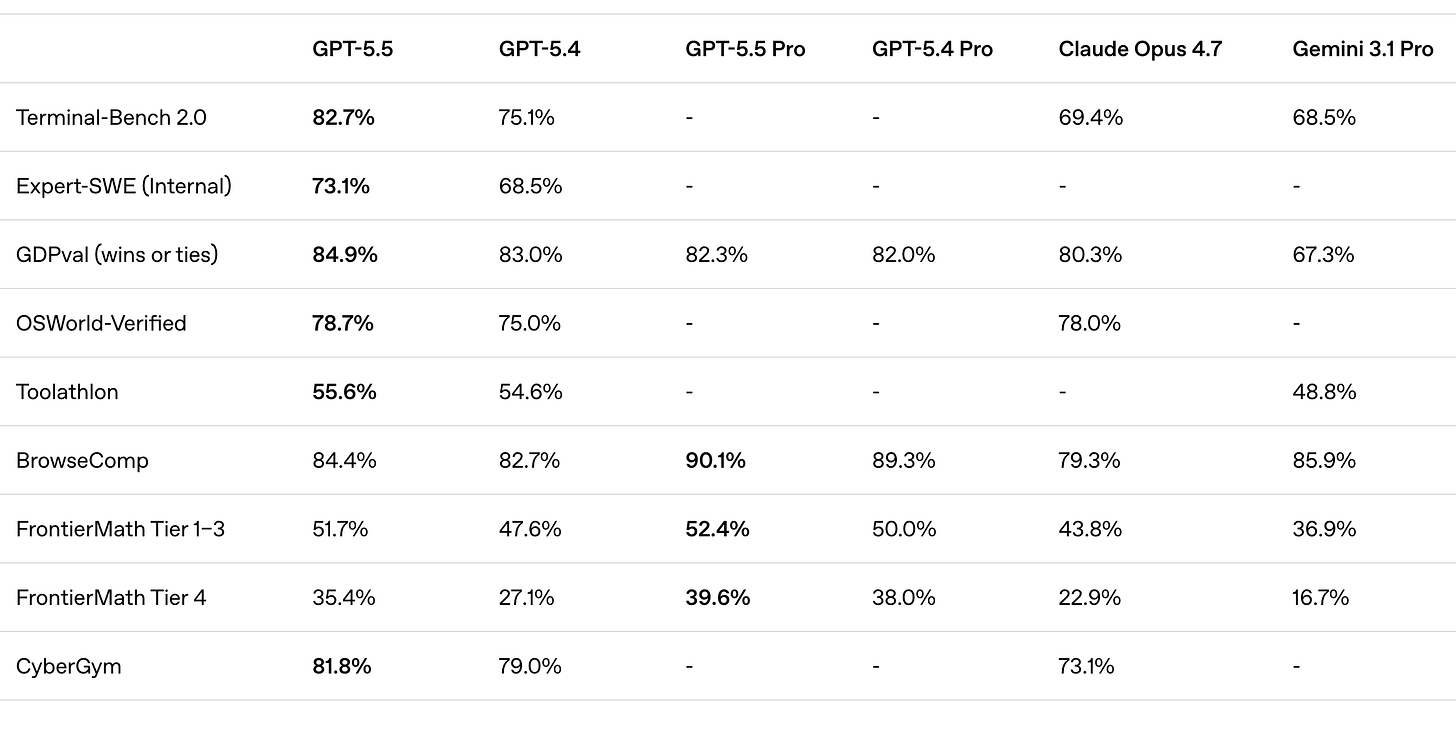

What GPT-5.5 changes

OpenAI released GPT-5.5 on April 23. Compared with Anthropic’s more dramatic Claude launches, it arrived almost quietly: less theatre, more product update. OpenAI describes GPT-5.5 as a model for complex work: coding, research, data analysis, documents, spreadsheets, and tool use. The main claim is less “better chatbot” and more “can carry more of the task itself.”7

The system card says GPT-5.5 understands tasks earlier, asks for less guidance, uses tools more effectively, checks its work, and keeps going until the job is done.8

OpenAI also released GPT-5.5 Instant as the new default ChatGPT model, claiming fewer hallucinations and better factuality in high-stakes areas like law, medicine, and finance.9

The company has been pushing ChatGPT deeper into work: coding, research, documents, spreadsheets, long context, tool use, and agent-style tasks. Codex and the broader developer workflow matter here. Coding has become one of the most commercially important AI use cases because it turns model capability into measurable productivity, budgeted usage, and high-frequency demand.10

That matters for the OpenAI vs Anthropic story.

OpenAI’s edge is that it has been more aggressive in securing dedicated compute capacity ahead of demand. Anthropic is growing fast, but much of its capacity comes through Amazon, Google, and other partners. That may be prudent. It also means Anthropic can be more exposed when compute gets rationed.

You can already see the tension around Claude. Anthropic says recent Claude Code quality issues came from prompt changes, not reduced reasoning, and published a postmortem after user complaints. But the wider market read was clear: when AI products become serious work tools, users notice every throttle, slowdown, quota, and quality wobble immediately. The White House also reportedly raised compute-capacity concerns around Anthropic’s plan to expand Mythos access, alongside national-security concerns. That does not prove Anthropic faked a security argument because of compute. It does show that safety, access, and capacity are now tangled together.1112

OpenAI does not simply “own data centres” the way Google or Microsoft do. Stargate is partner-centric: Oracle, SoftBank, Microsoft, utilities, builders, finance partners. OpenAI says it is securing and building compute capacity with partners, not doing everything alone.13

Anthropic also has big compute deals, but mostly through hyperscalers: Google/Broadcom TPUs, Amazon, CoreWeave. That means Anthropic is more dependent on partner allocation. Anthropic itself says it signed a Google/Broadcom deal for multiple gigawatts of TPU capacity starting in 2027.14

Over the past year, Anthropic has taken a lot of the enterprise narrative. Claude became especially strong in coding and knowledge work. In our recent Anthropic vs. OpenAI piece, the key point was that Anthropic still trails OpenAI in consumer reach, but the revenue and enterprise gap narrowed much faster than most people expected.15

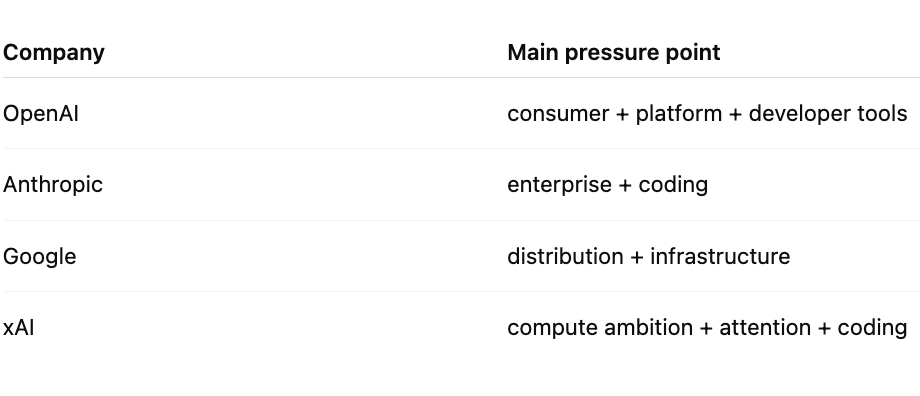

OpenAI is now fighting on several fronts at once.

Anthropic is strong in enterprise and developer workflows. Google has Gemini, Search, Android, Workspace, Cloud, and its own infrastructure. xAI has Elon Musk, distribution through X, an aggressive compute build-out, and now a reported SpaceX agreement around Cursor. DeepSeek and other open-weight models put pressure on pricing and on the assumption that frontier capability must always remain closed and expensive.16

This is not a simple two-company race anymore.

OpenAI still has the broadest public brand in AI. Anthropic has a focused enterprise machine. Google has unmatched distribution and infrastructure. xAI has a founder who can turn attention, capital, and engineering speed into a weapon. DeepSeek (see our essay last week) has shown that efficiency and open weights can move the market even when the US labs still lead in many areas.

That makes OpenAI’s position more complicated, but not weak.

The company is still one of the central players in the market. It still has distribution, product velocity, brand, developer attention, enterprise demand, and one of the strongest research teams in the world.

The issue is different. OpenAI’s ambition requires the physical world to move at software speed.

The physical world is not very good at that. Annoying habit.

3. The constraint has moved into the physical world

AI used to be discussed mainly as a model race.

Who has the best benchmark?

Who has the longest context window?

Who is ahead in coding?

Who is cheaper per token?

Who has the best agent demo?

Those questions still matter. But they no longer carry the whole story.

In our DeepSeek V4 piece, we used a simple line: the model gets the headline, but the stack carries the weight.

That stack now reaches deep into the physical world.

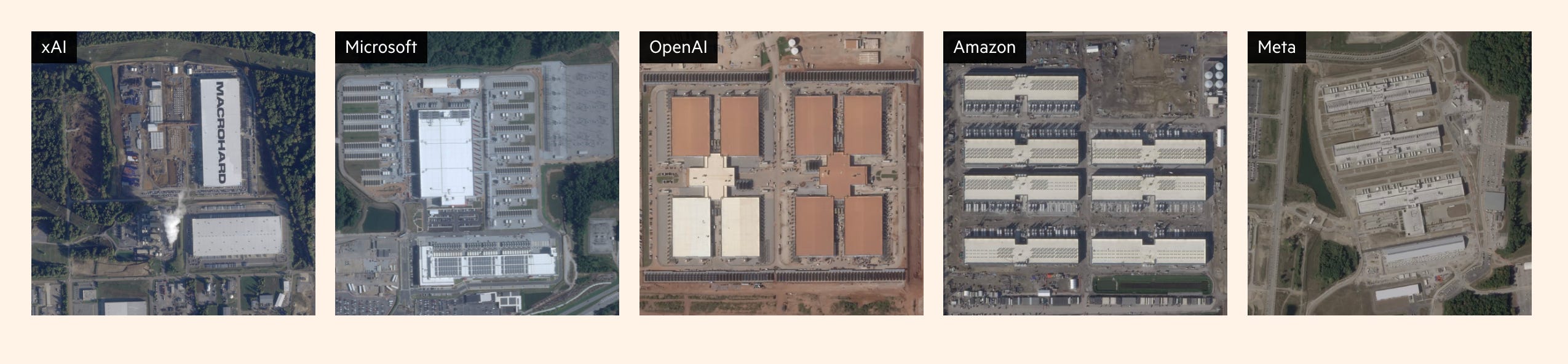

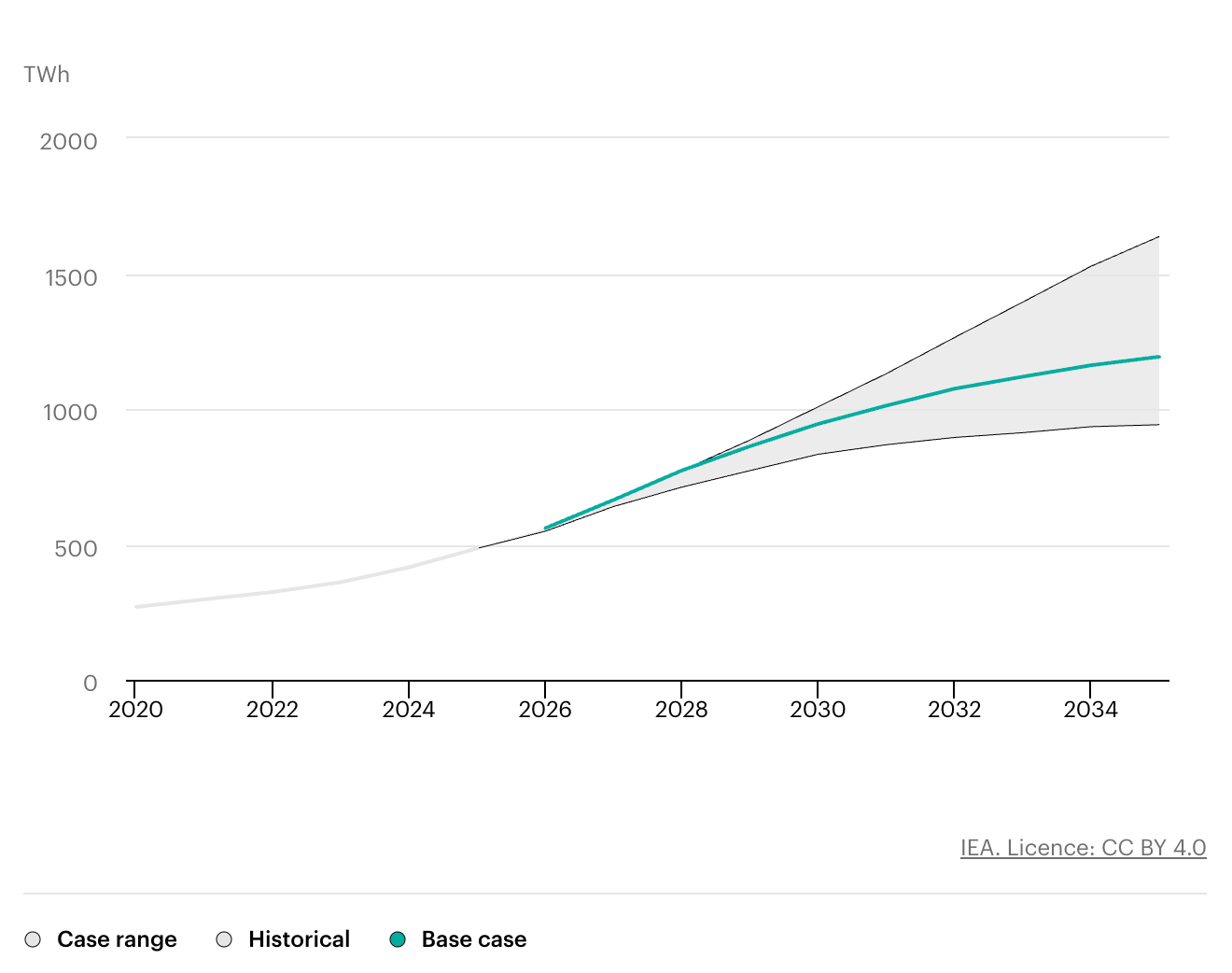

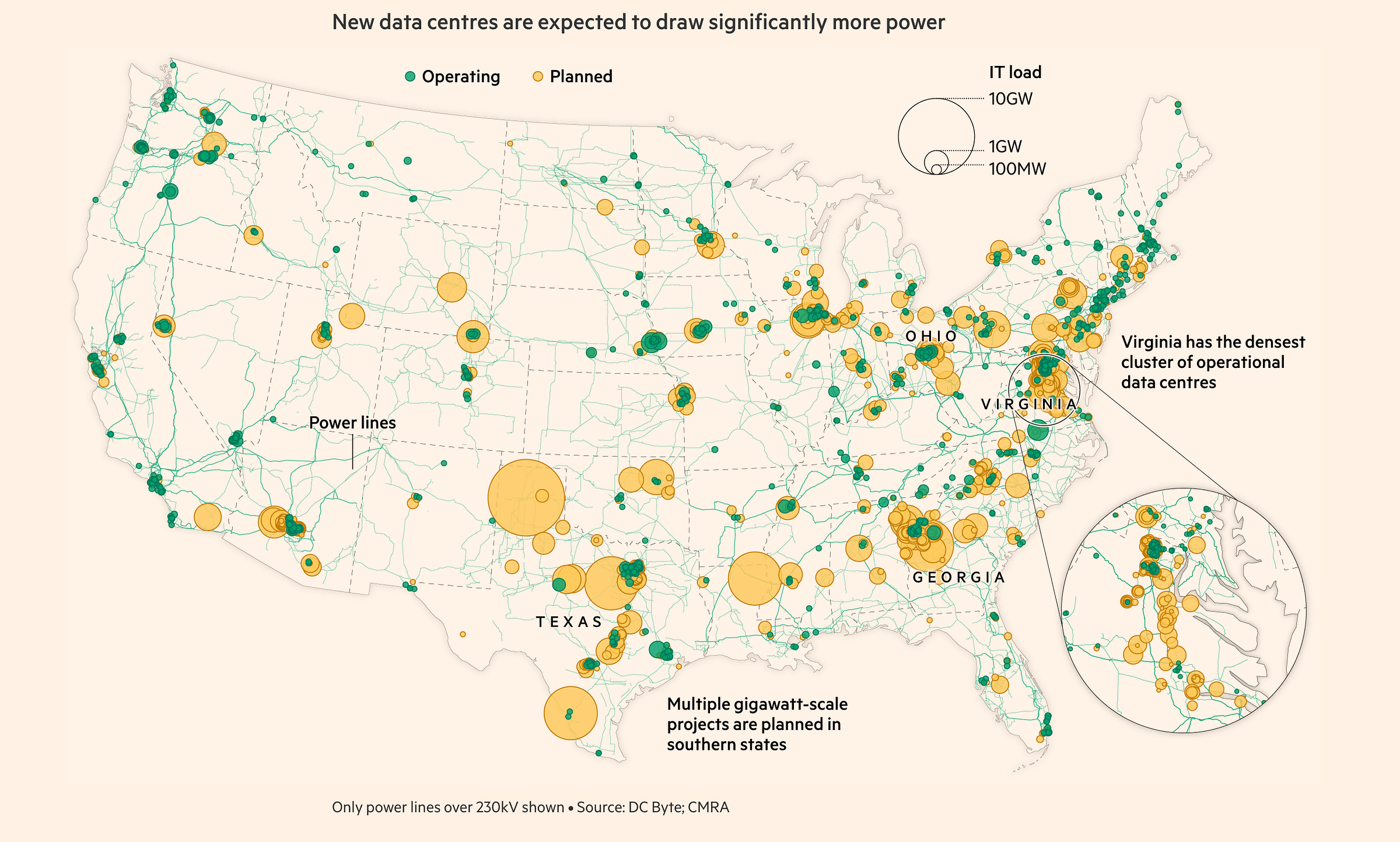

The International Energy Agency says electricity consumption from data centres is rising fast, and AI-focused data centres are growing faster than the overall data-centre market. In its 2026 energy and AI work, the IEA projected that data-centre electricity consumption could roughly double from 2025 to 2030, while AI-focused data-centre electricity consumption could triple over the same period.17

Electricity consumption by data centres, 2020-2035
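To make "double" and "triple" easier to compare, here is a rough sketch of the yearly growth rates those IEA figures imply. It assumes smooth compounding over the five years from 2025 to 2030 and treats "roughly double" and "could triple" as exactly 2x and 3x; both are simplifications of ours, not numbers the IEA publishes in this form.

```python
def implied_annual_growth(multiple: float, years: int) -> float:
    """Constant yearly growth rate that compounds to `multiple` over `years`."""
    return multiple ** (1 / years) - 1


# Assumption: exact 2x and 3x multiples over the 5 years from 2025 to 2030.
all_dc_growth = implied_annual_growth(2.0, 5)  # all data centres
ai_dc_growth = implied_annual_growth(3.0, 5)   # AI-focused data centres

print(f"All data centres: about {all_dc_growth:.1%} per year")
print(f"AI-focused data centres: about {ai_dc_growth:.1%} per year")
```

Under those assumptions, overall data-centre consumption grows at just under 15% a year and AI-focused consumption at close to 25% a year.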

The Financial Times put the US power crunch in sharper terms. According to its analysis, new data centres may require about 44GW of additional capacity by 2028, while only about 25GW may come online in time. That leaves a gap of roughly 19GW.18
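The arithmetic behind that gap is simple enough to check in a few lines. This is a sketch using only the two Financial Times figures quoted above; the variable names are ours, and the "share unmet" line is a derived ratio, not a number from the FT analysis.

```python
# Figures as quoted from the Financial Times analysis (approximate, in GW).
required_gw = 44.0  # additional capacity new data centres may need by 2028
expected_gw = 25.0  # capacity that may come online in time

gap_gw = required_gw - expected_gw
share_unmet = gap_gw / required_gw  # derived ratio, our own calculation

print(f"Gap: {gap_gw:.0f}GW")
print(f"Share of required capacity unmet: {share_unmet:.0%}")
```

Put differently, on these estimates a bit more than two fifths of the capacity data centres may need by 2028 would not arrive in time.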

Bloomberg has reported on another bottleneck: electrical equipment. Transformers, switchgear, and other grid components are not glamorous, but without them, data-centre plans do not become operating capacity.19

This is the part of AI that does not fit neatly into the app-store imagination.

You can launch a model update overnight. You cannot launch a grid connection overnight. You can raise capital quickly in a hot market. You cannot manufacture transformers, secure permits, build substations, contract power, and bring a massive data centre online at the same pace.

That matters for OpenAI because compute is not a side cost. It is the production input.

Every better model, longer context window, coding agent, research task, voice interaction, image generation, and enterprise workflow consumes compute. The more useful the product becomes, the more demand it creates. If the system works, usage expands. If usage expands, the need for compute expands with it.

This creates a strange-looking business pattern.

A normal software company tries to scale revenue while marginal costs stay low. OpenAI is scaling a service where the product becomes more valuable as it becomes more compute-hungry. Efficiency improvements help, and they matter a lot. But the aggregate demand curve is still brutal.

This is why the missed-target story can be misleading.

A SaaS company missing user and revenue targets often points to weaker demand, weaker retention, poor pricing, or competition. For OpenAI, those factors may all matter, but the supply side is unusually important. Capacity, pricing, latency, reliability, model routing, inference cost, and access to compute all shape what the company can sell and how profitably it can sell it.

The better OpenAI gets, the more infrastructure it needs.

That is the core tension.

4. What OpenAI has to prove

The company now has to prove that it can turn capital into compute, compute into reliable products, and reliable products into paid work at a pace that justifies its valuation and spending commitments.

The Wall Street Journal reported that OpenAI missed internal user and revenue targets while facing internal concerns about very large future compute commitments.20

That is a different kind of company from the one people imagined when ChatGPT first broke into the mainstream.

Back then, the story felt like software: a new interface, a magical chatbot, a consumer product spreading through the world at absurd speed.

Today, OpenAI looks more like a hybrid between a software platform, a research lab, and a massive infrastructure buyer with enormous distribution.

That makes the business harder to read.

If OpenAI underbuilds compute, it risks product quality, latency, reliability, and enterprise momentum. If it overbuilds compute, it risks carrying massive commitments before revenue catches up. If it moves too slowly, Anthropic, Google, xAI, and open-weight models take share. If it moves too aggressively, the CFO problem becomes real.

This is why the lawsuit is a distraction, even if it matters.

The courtroom asks who controls OpenAI’s mission.

The market asks whether OpenAI can justify its valuation. The Financial Times reported that some investors have questioned OpenAI’s $852bn valuation as the company shifts strategy and faces pressure from Anthropic and Google.21

The operating question is more concrete: can OpenAI secure enough compute, at the right cost, and turn it into work customers will pay for every day?

That is where Anthropic matters: Anthropic’s strength is not only that Claude is good. It is that Claude has become useful in high-value workflows, especially coding and enterprise knowledge work. That type of usage is easier to monetise than casual chatbot traffic because it sits closer to budgets, teams, and measurable output.

The Wall Street Journal reported that Anthropic is launching a $1.5bn joint venture with Wall Street firms to sell artificial-intelligence tools to companies, including those backed by private-equity firms: a clear push deeper into enterprise deployment.22

That is where Google matters: Google can place Gemini into existing surfaces: Search, Android, Workspace, Cloud. It also owns large parts of the infrastructure stack. In Q1, Google said Gemini Enterprise paid monthly active users grew 40% quarter over quarter.23

That is where xAI matters: Elon Musk understands distribution, capital markets, manufacturing pressure, and infrastructure theatre better than most technology founders. Bloomberg reported that SpaceX has an agreement giving it the right to acquire Cursor for $60bn later this year, or pay $10bn for joint work, as Musk pushes into AI coding tools.24

DeepSeek pressures the cost of intelligence from below.

Anthropic pressures OpenAI in enterprise workflows.

Google pressures it through distribution and infrastructure.

xAI pressures it through speed, capital, and coding ambition.

The power grid pressures everyone.

OpenAI sits in the middle of all of it.

Our view: The company is still central to the AI market. But the easy phase of the story is over.

The next test is whether OpenAI can industrialise intelligence.

Industrialising intelligence means building the physical, financial, and operational system behind the software. It means buying compute before revenue fully proves it. It means betting on demand curves that may be real but uneven. It means managing a product race while also managing infrastructure risk.

It is a new kind of industrial build-out.

🎚️🎚️🎚️🎚️ Producer’s Note

YUNGBLUD fits this one.

OpenAI and Anthropic are still the young blood: barely a few years old, moving fast, loud, imperfect, and already carrying too much expectation.

That is the strange part of this AI moment. These companies still feel like startups, but the systems around them now look industrial: courts, governments, data centres, power contracts, and capital markets.

Young blood with transformer shortages. Less romantic. More expensive.

Share with your yung friends.

Fab 🌓

StudioAlpha Capital is a Delaware-structured pre-seed venture fund backing AI-native B2B software startups at day zero. Legal counsel: Cooley LLP. Fund administration: AngelList.

Sources

https://www.reuters.com/business/openai-falls-short-revenue-user-targets-it-races-toward-ipo-wsj-reports-2026-04-28/

https://www.wsj.com/tech/ai/the-lore-of-sam-altman-is-being-tested-like-never-before-968227ea?st=78SrNq&reflink=article_copyURL_share

https://www.anthropic.com/engineering/april-23-postmortem

https://www.wsj.com/tech/ai/white-house-opposes-anthropics-plan-to-expand-access-to-mythos-model-dc281ab5

https://openai.com/index/building-the-compute-infrastructure-for-the-intelligence-age/

https://www.anthropic.com/news/google-broadcom-partnership-compute

https://ig.ft.com/ai-power/