The AI App Land Grab

Why the battle has moved from frontier models to workflows, and why the window for disruption is unusually wide open

A year ago, people talked as if frontier models might swallow the whole software industry. Bigger models. Better benchmarks. End of story.

That story is already breaking.

The frontier labs are not floating above the market like gods of intelligence. They are being pulled down into it. Toward coding. Toward enterprise. Toward consumer distribution. Toward applications. In other words: even the model companies are being forced to pick lanes.

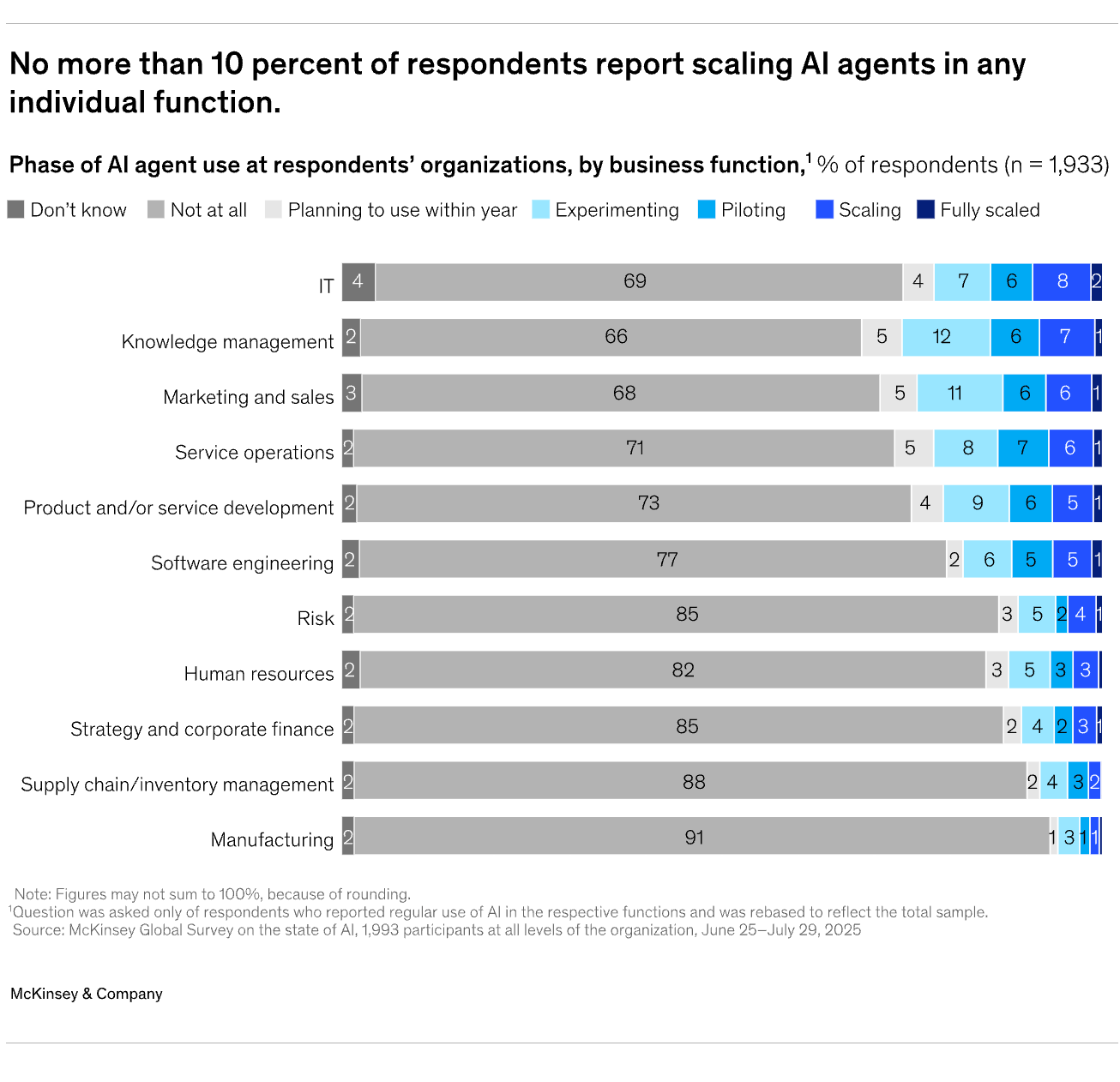

Meanwhile, most big companies are still moving like big companies move: cautiously, politically, and far too slowly. The tools are getting better by the quarter. The org charts are not.

That is why the application layer matters so much now. This is where old software categories get attacked. Where ugly workflows get rebuilt. Where startups can move faster than incumbents. And where buyers stop caring about abstract model IQ and start asking a much simpler question: does this thing actually solve work?

So whether you are a founder, an investor, or a CIO trying to bring AI into a real company, you should pay attention. The window is wide open. Entire software categories are up for grabs. Some companies will get rebuilt. Some will get buried. And most people still do not understand how early we are.

This post is an attempt to make that landscape clearer — using the latest thinking and data on where AI apps are actually winning, what makes them durable, and where the real opportunity sits.

1. AI Is the Next Layer, Not a Standalone Miracle

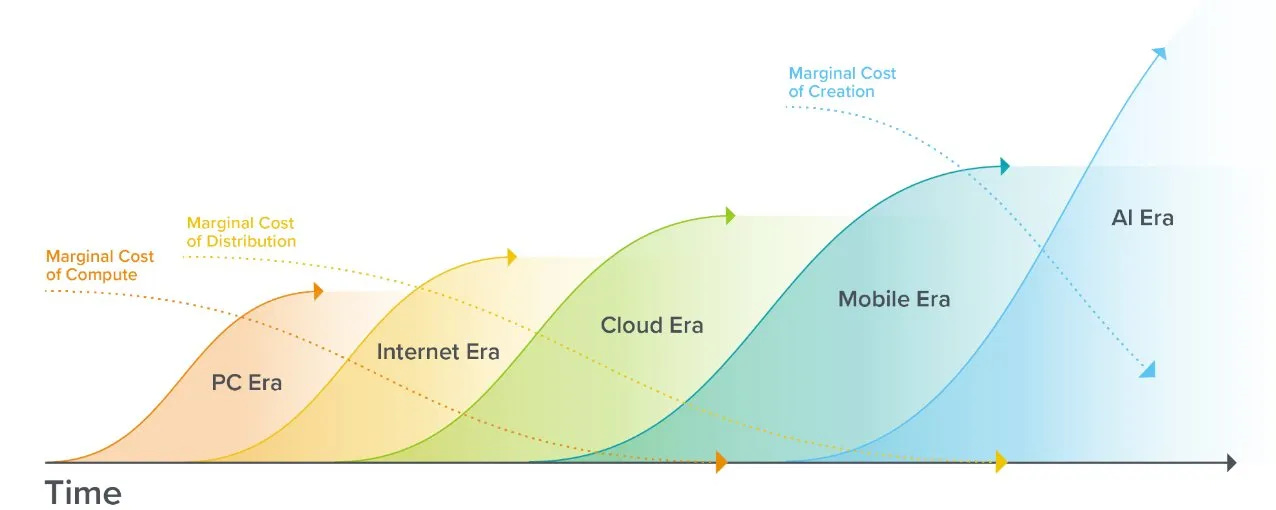

A useful part of the a16z framework is that it starts with a simple observation: big product cycles create big companies.1

First came the PC era. Then the internet. Then cloud. Then mobile. Each wave created an infrastructure layer and an application layer. Some companies built the rails. Others built the products people actually used every day. That pattern is not new.

What matters now is that AI is not arriving in an empty world. It is not some science project floating in a vacuum. It is landing on top of everything that came before: cloud infrastructure, smartphones, broadband, app stores, APIs, and billions of connected users. That changes the speed of adoption.

If the same model capabilities had appeared in the age of ENIAC, they would have been intellectually interesting and commercially irrelevant. Today they are plugged into a global software stack and a global user base. That is why this wave can move so much faster than earlier ones.

This is also why the usual “is AI real or just hype?” debate is already a bit behind the curve. The more useful question is no longer whether AI matters. It clearly does. The more useful question is where the value will actually accrue.

That is where the a16z discussion becomes more interesting. Because once you move beyond the grand platform-shift narrative, you hit the harder problem: not every AI product becomes a company, not every company becomes durable, and not every market rewards the same kind of player.

So the real work starts one level lower. Not with “AI changes everything,” but with: where exactly can an AI app become a real business, and what makes it hard to replace?

2. The a16z Map: Three Business Plays and One Architectural Shift

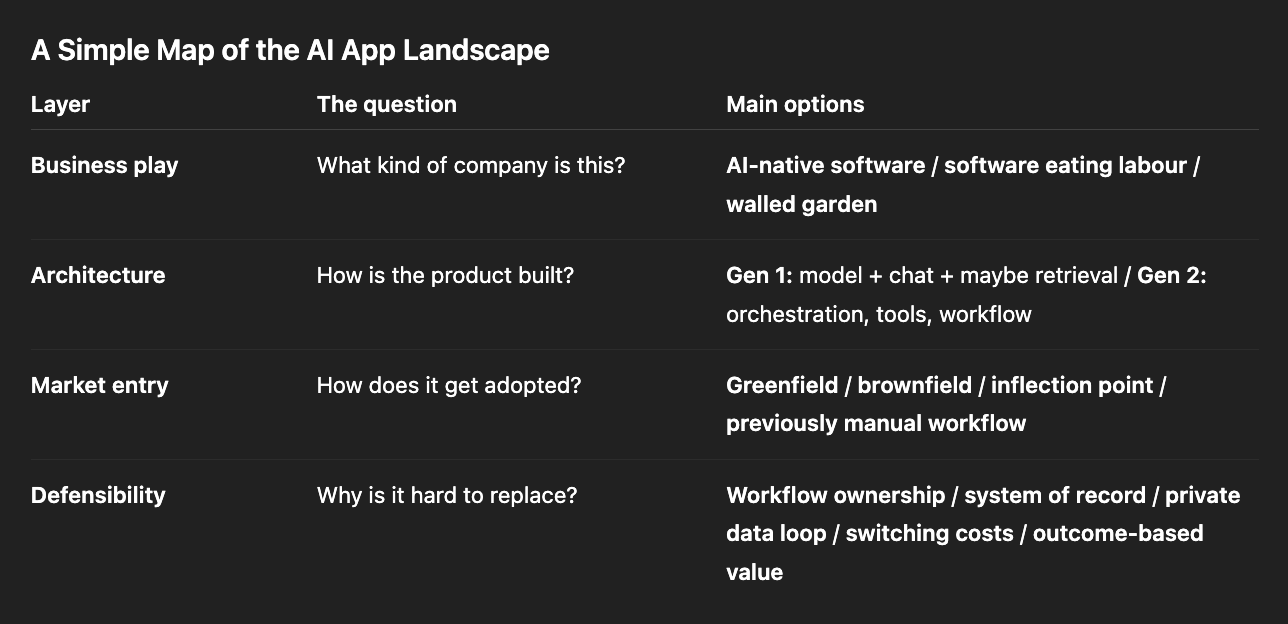

Strip away the case studies, the jokes, and the side comments, and the framework is basically a map of three main business opportunities in AI applications — plus one architectural shift that increasingly runs through all of them.

That is already useful, because too many people still talk about “AI apps” as if it were one single market. It is not. These are different strategic games with different economics and different moats.

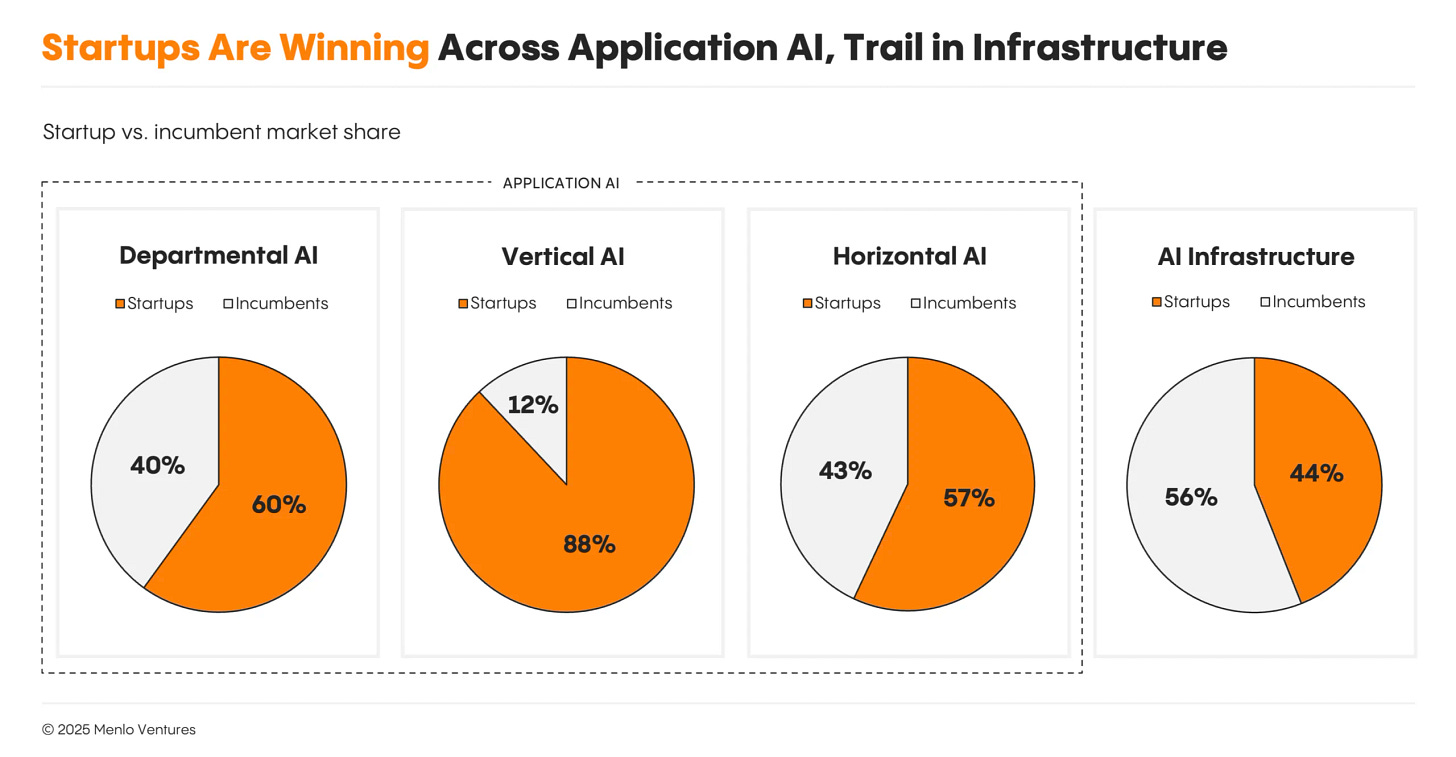

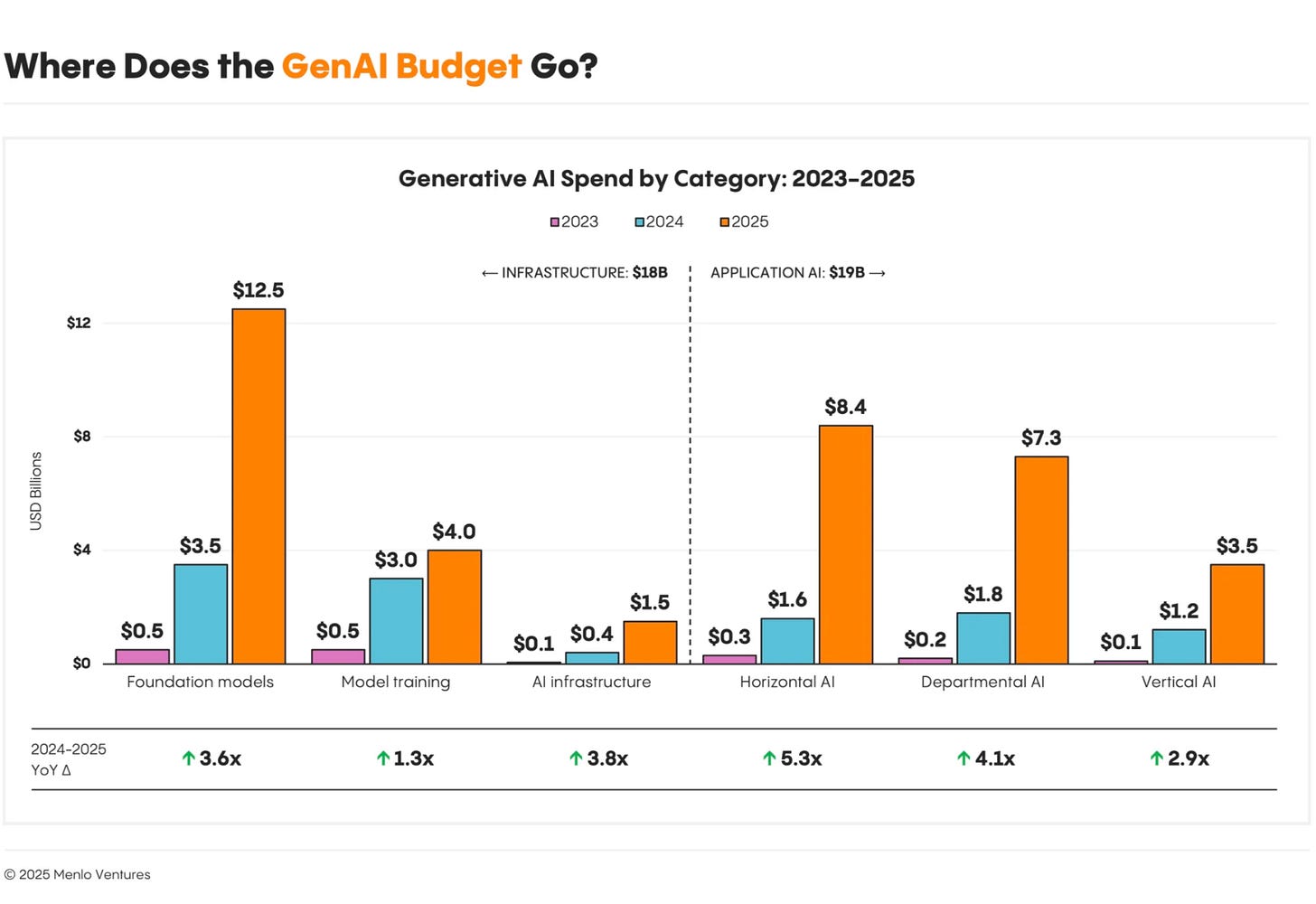

Menlo Ventures reports that the application layer captured $19B in 2025 and splits applications into three categories:2

Departmental AI ($7.3 billion), built for specific job roles like software development or sales;

Vertical AI ($3.5 billion), targeting specific industries like healthcare or finance;

Horizontal AI ($8.4 billion), increasing productivity across all functions.

2.1 Traditional software is going AI-native

The first category is the easiest to understand. Existing software categories are being rebuilt with AI-native products.

Take any major software category: ERP, support, design, legal workflow, sales, finance, accounting, HR. In almost every case, a new founder can now look at the incumbent product and ask a dangerous question: if we were building this from scratch today, would we build it like this?

Usually the answer is no.

That creates room for new products that are faster, more automated, more outcome-driven, and less dependent on manual work by the user. In that sense, the a16z team is right: this looks similar to earlier platform transitions. In the cloud era, a new generation of companies was built because old on-premise software was not designed for the new world. The same logic now applies to AI-native software. LightFrame fits that pattern well: instead of adding AI to a legacy banking stack, it is rebuilding a classic core-software category from the ground up with a modern, API-first architecture.3

But here is the important correction: this does not mean the incumbents are dead.4

In fact, many incumbents may become stronger. They already own the customer relationship, the workflow, the data, the billing relationship, and often the system of record. They can add AI on top and charge more. And because they are already deeply embedded, customers may accept mediocre AI from an incumbent more readily than excellent AI from a startup they do not trust yet.

That is why the startup opportunity is often not “I will kill Salesforce because my interface is nicer.” That is rarely how this works in practice.

The better opening is usually one of three things:

a greenfield customer that has no stack yet

a customer at an inflection point where the old tool has clearly broken

a part of the market where the incumbent is badly designed, badly loved, or too slow to adapt

So yes, traditional software is going AI-native. But founders should be careful not to confuse a real platform transition with an easy displacement story. The transition is real. The displacement is much harder.

2.2 Software is starting to eat labour

This is the category the a16z team seems most excited about, and for good reason.5

Traditional software sells into software budgets. AI software can increasingly sell into labour budgets. That is a much bigger market.

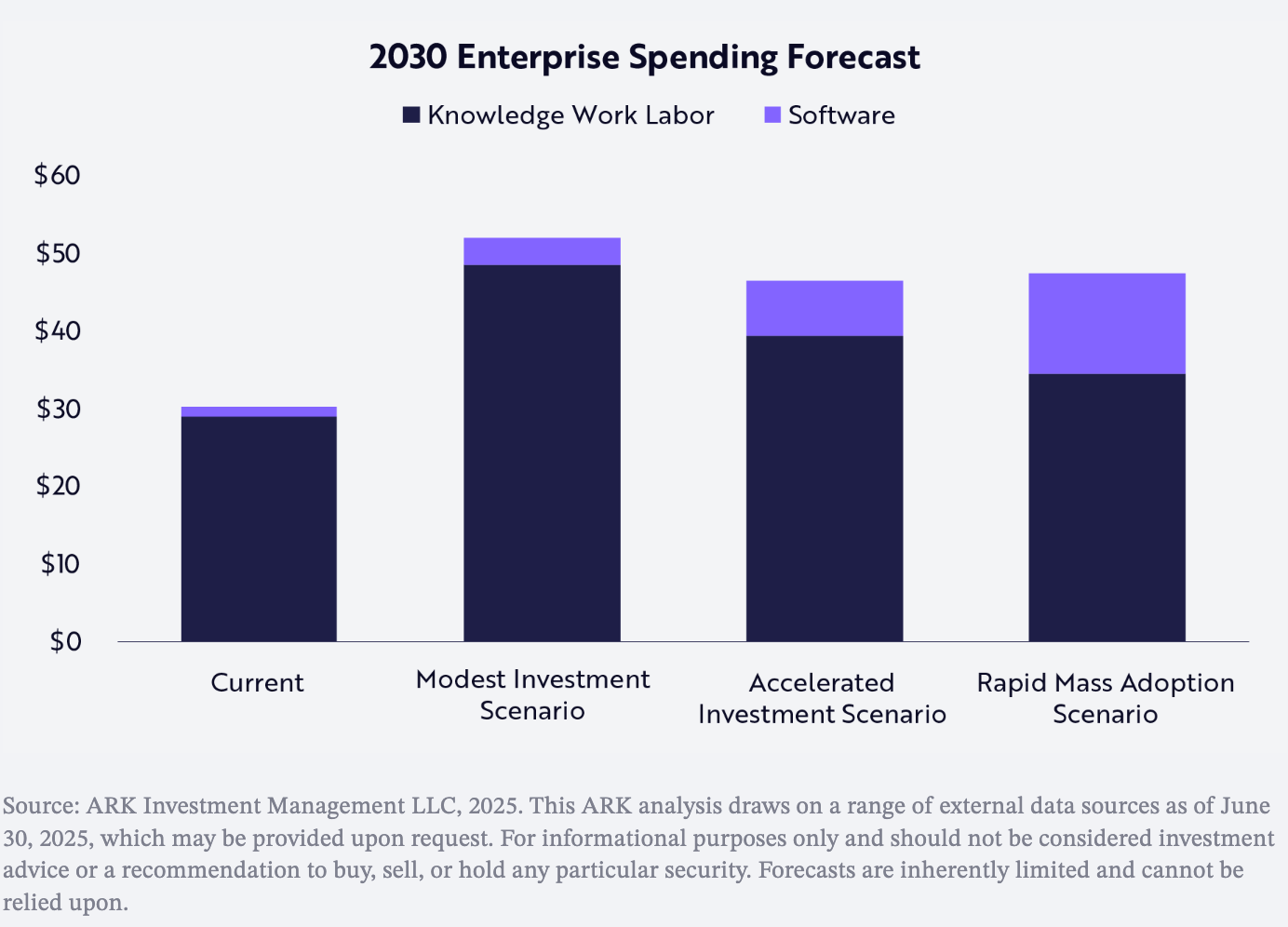

The software market is still small compared with the labour it could replace or augment. Global software and cloud spend is about $1.25 trillion today, versus roughly $30 trillion in knowledge-worker wages. ARK argues that AI will shift more of that labour spend into software. If that happens, software growth could accelerate sharply, with annual spending rising from $1.25 trillion today to around $7 trillion by 2030 at the midpoint of ARK’s range.6
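To make the scale of that claim concrete, here is a small back-of-envelope sketch using only the figures quoted above. The five-year horizon is an assumption (the text only says "today" and "by 2030"), and `implied_cagr` is a helper written for this illustration, not something taken from ARK.

```python
# Figures quoted in the text, in USD trillions.
software_spend_today = 1.25
knowledge_worker_wages = 30.0
software_spend_2030 = 7.0  # midpoint of ARK's range, as quoted above

# Software and cloud spend as a share of knowledge-worker wages today.
share_today = software_spend_today / knowledge_worker_wages

# How many times larger software spend would have to become.
growth_multiple = software_spend_2030 / software_spend_today


def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate needed to go from start to end."""
    return (end / start) ** (1 / years) - 1


# ASSUMPTION: a five-year horizon; adjust `years` if "today" means another year.
cagr_5y = implied_cagr(software_spend_today, software_spend_2030, years=5)

print(f"Software spend vs. wages today: {share_today:.1%}")
print(f"Required growth multiple: {growth_multiple:.1f}x")
print(f"Implied annual growth over 5 years: {cagr_5y:.0%}")
```

Under that assumption, software spend is about 4% of the wage pool today and would need to grow 5.6x, or roughly 41% a year, to reach the midpoint.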

This matters because many workflows were never worth automating in the old world. A task might have been too messy, too language-heavy, too context-dependent, too variable, or simply too expensive to build software around. You hired people instead. Not because it was elegant, but because there was no better option.

AI changes that equation.

Now a software product can handle part of a job, or most of a job, or the ugly overflow around a job like Klarna’s AI customer service: after-hours calls, first-line support, document handling, multilingual interaction, compliance scripting, collections workflows, evidence gathering, internal coordination, or repetitive financial admin. Cashflowy fits the same pattern from a different angle: instead of selling another finance dashboard, it is using AI to take over repetitive bookkeeping work that many solo operators still handle manually or through a traditional bookkeeper. Not perfectly in every case. But often well enough to create real economic value.

That point is critical. In many cases the product does not have to replace a full human role to become valuable. It just has to do enough useful work at a cost and speed that changes the economics for the customer.

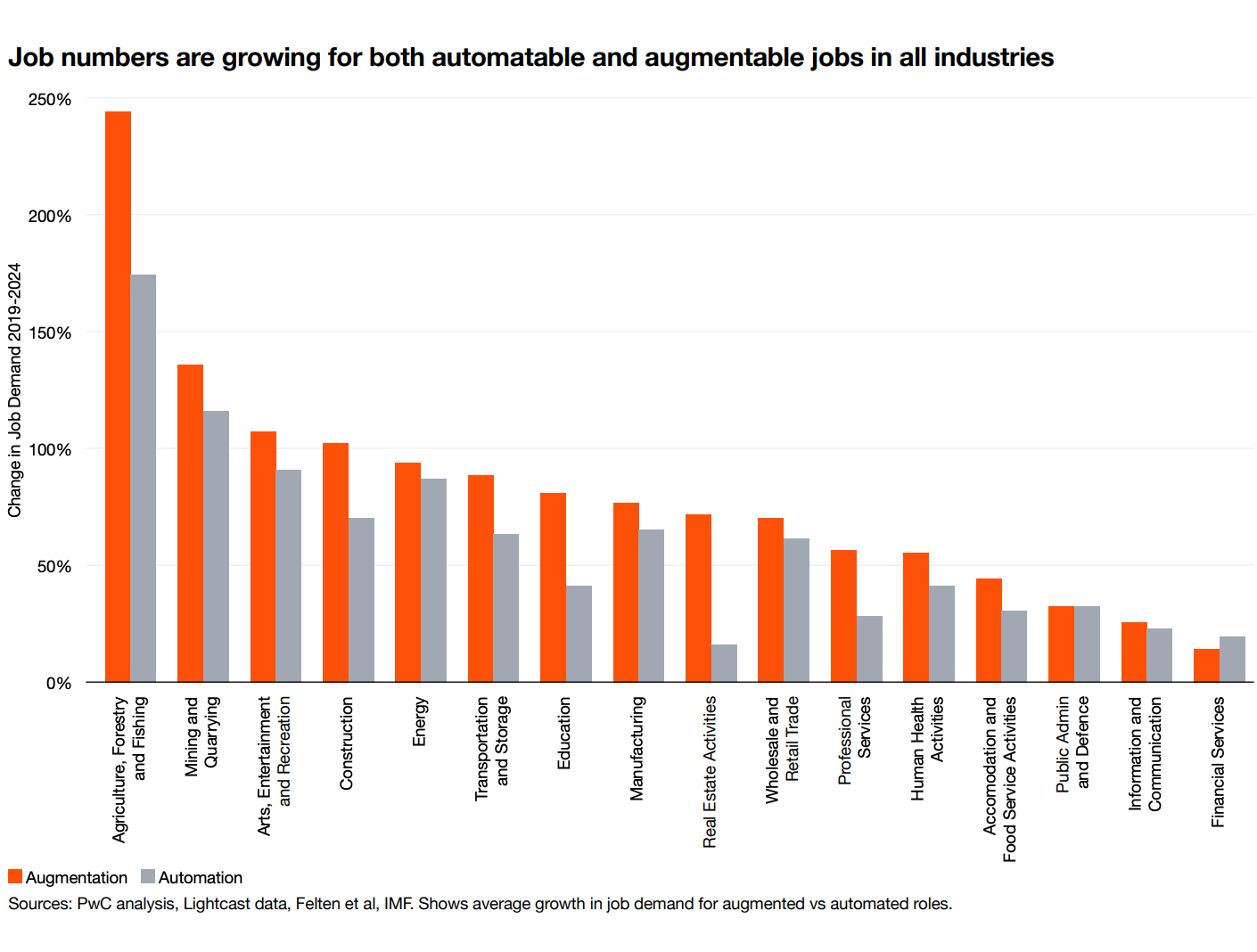

That is why “software eating labour” is a better framing than just “AI replacing jobs,” though even that needs nuance. In practice, a lot of these systems will first augment labour, cover labour shortages, or make low-value tasks economically feasible. The headline may sound dramatic. The actual mechanism is often more boring and more powerful: the value stays the same, but the cost of delivery falls sharply.

PwC’s latest data supports exactly that point: in practice, augmentation often matters as much as automation.7

That creates room for entirely new software categories. Not because nobody ever wanted the service before, but because nobody could deliver it at the right price.

This is also where founders can make a common mistake. They focus too much on the cost-saving pitch. Customers like saving money, yes. But the stronger pitch is often on the revenue side or the outcome side.

If your product helps a lender collect more, helps a legal practice take more cases, helps a sales team respond faster, or helps an operator do the same work with fewer delays and errors, the value is easier to see. Cost reduction is good. Revenue lift, speed, and operational reliability are usually better.

2.3 The “walled garden” is where data becomes product

The third category is the most subtle and, in some ways, the most powerful.

Some businesses are built around data that is technically public, practically inaccessible, operationally messy, expensive to structure, difficult to gather over time, or exclusively licensed. Before AI, those businesses could already exist. But often they were just data vendors. They sold access to the raw material.

AI changes the economics because it lets them do much more with that raw material.

The a16z metaphor here is useful: in the old world, you sold vegetables. In the new world, you can sell the finished meal.

That is the key shift.

If a company owns or controls a data source that others do not have in clean, usable form, AI allows that company to build a workflow, a recommendation engine, a legal memo, a medical answer engine, a procurement assistant, a fraud review system, or a decision tool directly on top of it. In other words, the value moves up the stack.

That matters because the moat is no longer “we have data.” Data alone is often overvalued in theory and under-monetised in practice. The stronger position is: we have data that others cannot easily assemble, and we can now turn it into a finished product the customer actually wants to pay for.

This is why the strongest examples in this category are not generic LLM wrappers. They are products where the user comes for an answer, a workflow, or a decision, but underneath that sits a data asset that the general model providers do not naturally have.

And that is also why this category is so interesting for startups and incumbents alike. Sometimes the winner is the new company that assembles a strange but valuable new data source. Sometimes the winner is an old data provider that finally realises it should stop selling cheap access and start selling expensive outcomes.

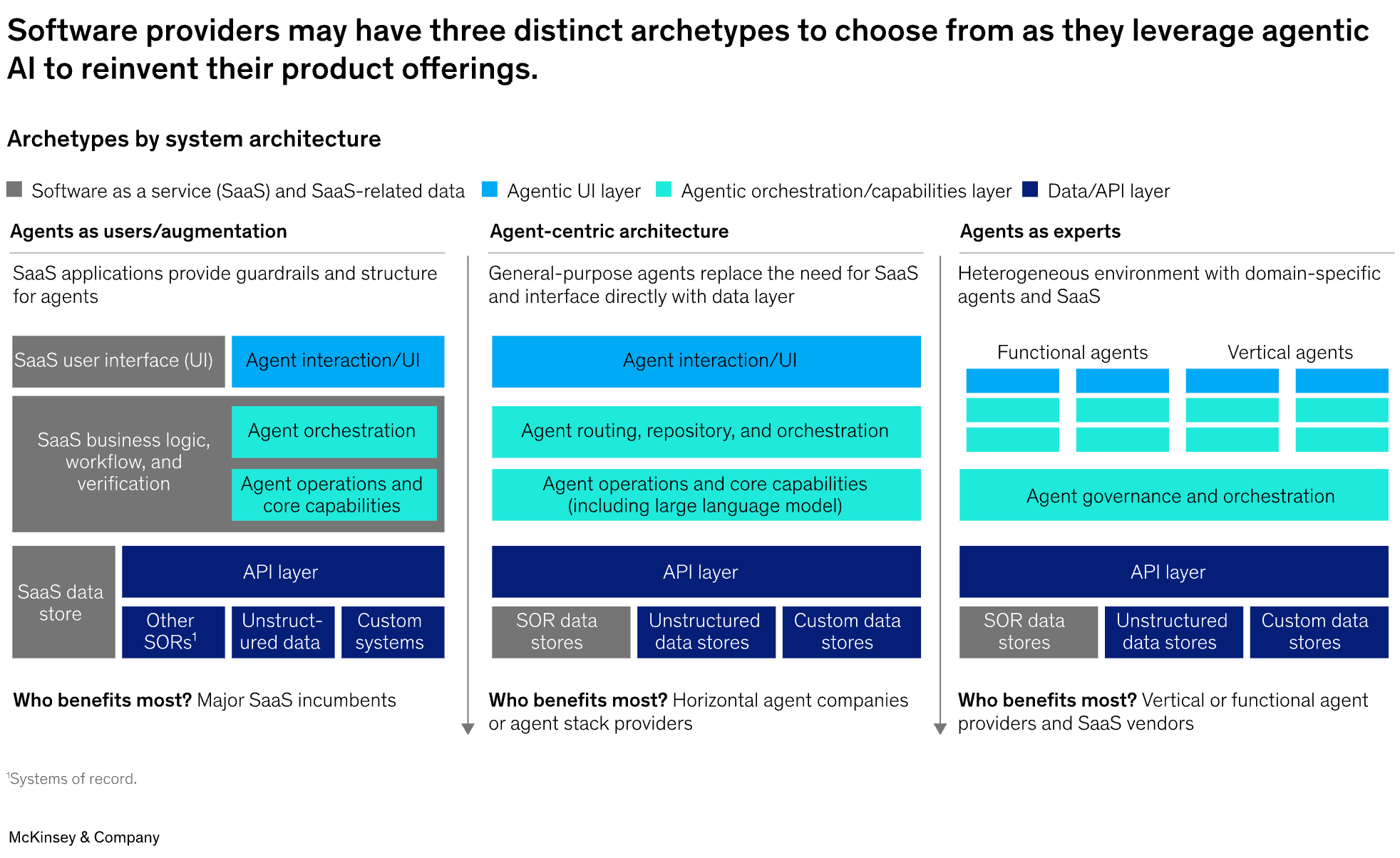

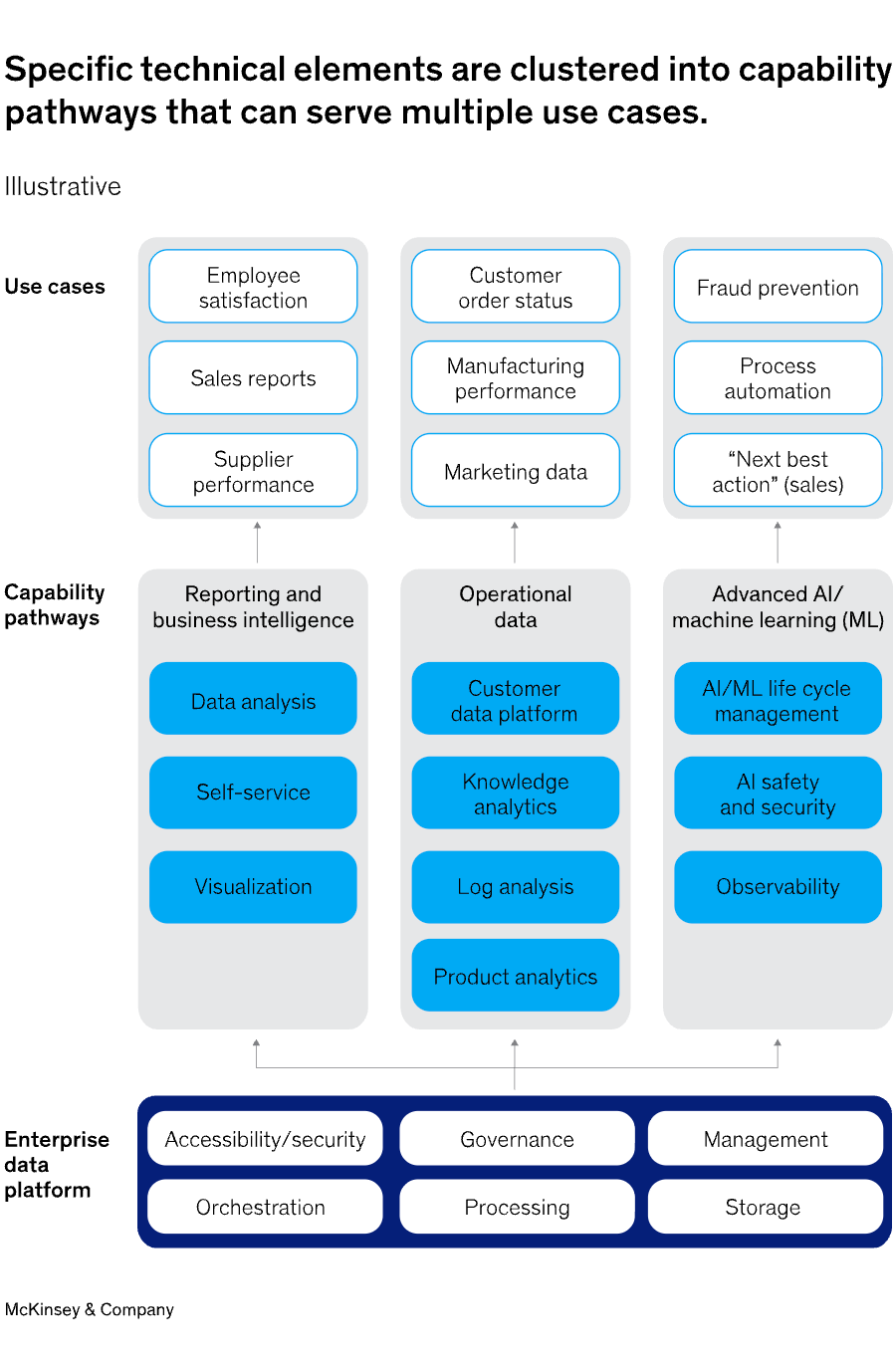

2.4 Orchestration is becoming the default architecture of serious AI-native products

There is one important refinement to the a16z framework.

In some categories, orchestration may become the product wedge. But in many AI-native startups, it is already becoming something else: baseline architecture.

That means the strongest products are no longer built around one model call and a chat box. Under the hood, they increasingly route between models, tools, retrieval systems, and execution steps. In some cases, that includes fallback logic, specialised models for different subtasks, and multiple systems working together in sequence.
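A minimal sketch of what routing with fallback can look like, assuming two hypothetical backends. `fast_model`, `strong_model`, and the `ROUTES` table are invented for illustration; a real product would call provider SDKs where these stubs sit.

```python
from typing import Callable


# Hypothetical stand-ins for model backends.
def fast_model(prompt: str) -> str:
    if len(prompt) > 40:
        raise RuntimeError("context too long for fast_model")
    return f"[fast] {prompt}"


def strong_model(prompt: str) -> str:
    return f"[strong] {prompt}"


# Routing table: subtask type -> ordered list of backends to try.
ROUTES: dict[str, list[Callable[[str], str]]] = {
    "classify": [fast_model, strong_model],
    "draft": [strong_model],
}


def route(task_type: str, prompt: str) -> str:
    """Try each backend registered for the task type, falling back on failure."""
    errors: list[str] = []
    for backend in ROUTES.get(task_type, [strong_model]):
        try:
            return backend(prompt)
        except RuntimeError as exc:
            errors.append(f"{backend.__name__}: {exc}")
    raise RuntimeError("all backends failed: " + "; ".join(errors))
```

The point of the sketch is the shape, not the stubs: the cheap model handles what it can, the expensive one catches the rest, and the caller never sees which one answered.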

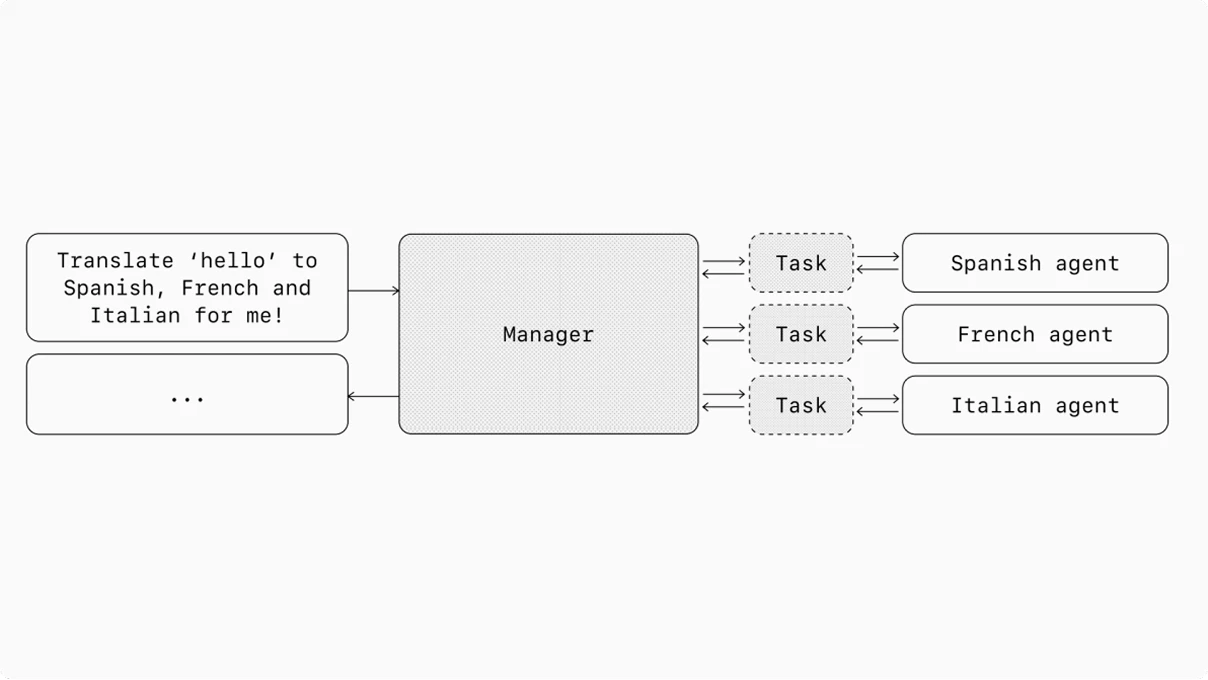

OpenAI: In general, orchestration patterns fall into two categories:8

Single-agent systems, where a single model equipped with appropriate tools and instructions executes workflows in a loop.

Multi-agent systems, where workflow execution is distributed across multiple coordinated agents.
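The single-agent pattern is easier to picture in code. The sketch below is a toy under stated assumptions: `stub_model` stands in for a real model call, `lookup_invoice` is a made-up tool, and the step cap and tool allowlist are design choices of this example rather than anything the OpenAI guide prescribes.

```python
from typing import Callable


# Hypothetical tool the agent is allowed to call.
def lookup_invoice(invoice_id: str) -> str:
    return f"invoice {invoice_id}: 120.00 EUR, unpaid"


TOOLS: dict[str, Callable[[str], str]] = {"lookup_invoice": lookup_invoice}


def stub_model(history: list[str]) -> dict:
    """Stand-in for a model call: requests one tool call, then answers."""
    if not any(line.startswith("tool:") for line in history):
        return {"action": "tool", "name": "lookup_invoice", "arg": "A-17"}
    return {"action": "final", "text": f"Summary based on {history[-1]}"}


def run_agent(user_request: str, max_steps: int = 5) -> str:
    """Loop: ask the model, run the requested tool, feed the result back."""
    history = [f"user: {user_request}"]
    for _ in range(max_steps):
        decision = stub_model(history)
        if decision["action"] == "final":
            return decision["text"]
        tool = TOOLS.get(decision["name"])
        if tool is None:  # refuse anything outside the allowlist
            history.append(f"tool: error, unknown tool {decision['name']}")
            continue
        history.append(f"tool: {tool(decision['arg'])}")
    raise RuntimeError("agent did not finish within max_steps")
```

A multi-agent system would split this loop across several such agents, each with its own tools and instructions, plus some way to hand work from one to the next.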

In coding or creative tooling, that orchestration layer may itself be part of the user-facing value. The customer may explicitly want access to the best model for each task inside one product.

But in many vertical applications, that is not what the buyer is buying. The buyer does not care whether the product uses one model or five. The buyer cares whether the workflow works, whether the answer is reliable, whether permissions are respected, and whether the product fits into the real operating environment.

That is why orchestration should not always be treated as a fourth equal market bucket. More often, it is becoming the default plumbing of serious AI-native software. It runs through the other categories rather than sitting neatly beside them.

And that also helps explain what is changing in the market. The first wave of AI-native products often looked like model plus chat plus maybe retrieval. The second wave is being judged on something harder: can the product carry real work across multiple steps, use tools safely, respect permissions and governance, and fit cleanly into the customer’s actual workflow? That is not just a better demo. It is a different product standard.

Put simply: Gen 1 products impressed people. Gen 2 products have to carry real work. And once a product has to carry real work inside a live enterprise workflow, architecture stops being background detail and becomes part of the product itself.9

The second wave is not mainly about better prompting. It is about better architecture.

The hard bits are not glamorous, but this is where enterprise reality begins:10

Identity — who is the user?

Authentication — are they really that user?

Authorization — what are they allowed to do?

Document-level permissions — which files may they actually see?

Data governance — how is data handled, stored, and controlled?

Auditability — can you show what happened, when, and why?

Observability — can you trace errors and debug the system?

Safe tool execution — can the agent act without causing damage?

Reliable state management — can the system keep context and progress across steps?
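Two items on that list, document-level permissions and auditability, can be sketched in a few lines. Everything here is hypothetical (the `Document`, `User`, and `AuditLog` types, the group names, the substring match standing in for real retrieval); the one idea worth keeping is the order of operations: filter by permission before anything reaches the model, and log what was returned.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Document:
    doc_id: str
    text: str
    allowed_groups: frozenset[str]


@dataclass
class User:
    user_id: str
    groups: frozenset[str]


@dataclass
class AuditLog:
    entries: list[dict] = field(default_factory=list)

    def record(self, user_id: str, action: str, detail: str) -> None:
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "user": user_id,
            "action": action,
            "detail": detail,
        })


def retrieve(user: User, query: str, corpus: list[Document], log: AuditLog) -> list[Document]:
    """Filter by permission first, then match the query, then log the result."""
    visible = [d for d in corpus if d.allowed_groups & user.groups]
    hits = [d for d in visible if query.lower() in d.text.lower()]
    log.record(user.user_id, "retrieve", f"query={query!r} hits={[d.doc_id for d in hits]}")
    return hits
```

A user in the `all-staff` group searching for "payroll" would get the policy handbook but never the finance-only summary, and the log would show exactly which documents were handed over.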

This is also why retrofitting Gen 2 capabilities into a Gen 1 product can get ugly.

Most AI startups can explain the first item on that list. The serious ones can explain all of them.

3. Differentiation Is Easy. Defensibility Is Hard

This may be the most important part of the whole framework.

AI makes it easier to build something impressive. It does not automatically make it easier to build something durable.

That distinction gets lost all the time.

A voice interface is differentiation.

A summarisation feature is differentiation.

An AI drafting tool is differentiation.

A fast demo is differentiation.

A smooth wrapper around a frontier model is differentiation.

None of that is necessarily defensible.

The hard part begins one level deeper:

A company becomes more defensible when it owns a workflow end to end.

More defensible when it becomes the system of record.

More defensible again when usage generates private operational data that improves the product over time.

And stronger still when the product is tied directly to business outcomes the customer cares about.

That progression matters because the surface layer is easier to copy than ever.

If a product’s magic lives mostly in the interface, the prompt design, or the basic model capability, the clock is already ticking. A better-funded startup can copy it. A model lab can absorb it. An incumbent can add a rough version of it into an existing product bundle and take the oxygen out of the room.

That is why the a16z team keeps returning to workflow ownership and system-of-record control. They are basically saying: the feature may get attention, but the workflow is what keeps the customer, and the system of record is what makes switching painful.

That is where the better examples from the podcast help.

Take legal AI. A product is not interesting simply because it drafts legal documents. Drafting is becoming table stakes. It becomes more interesting when it owns the whole workflow from intake to case handling to output, and when it learns from private outcome data that is not sitting in a public training set somewhere. That is where a company like Iqidis fits the more interesting pattern. Irys, its unified platform for legal work, is not meant to be a thin legal copilot, but a system designed to sit deeper in the workflow and become part of the operating infrastructure. At that point, the product is no longer just “AI for lawyers.” It starts to become operational infrastructure.

The same logic applies in collections or customer operations. A product is not defensible because it speaks with a human-like voice. That is a nice demo. It becomes defensible when it knows what to say, in which jurisdiction, in which sequence, with which compliance constraints, and with which expected outcome based on prior interactions across a large volume of real operational activity.

That is not a prompt trick. That is product depth.

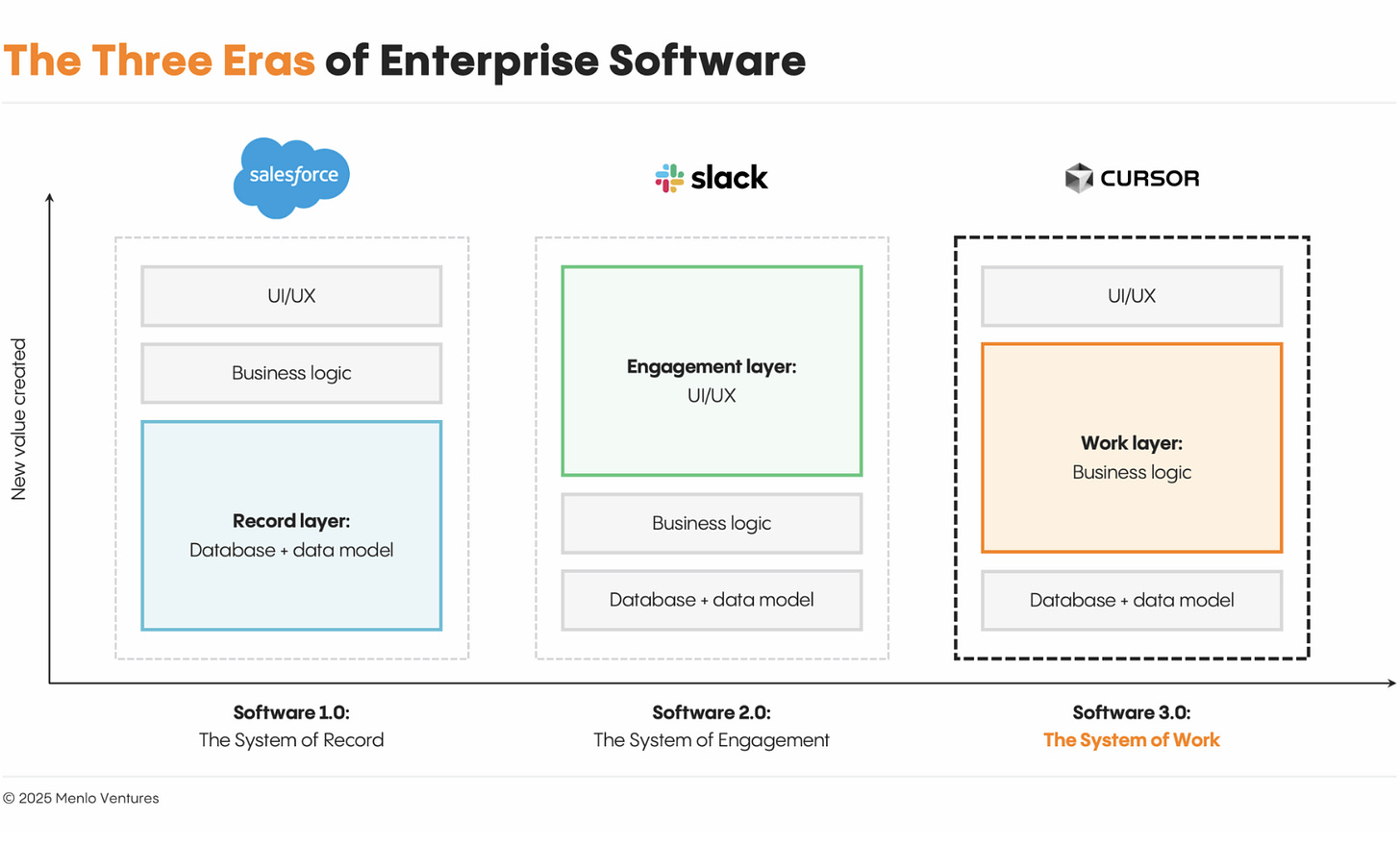

Menlo shows the shift clearly: in AI, value is moving away from the interface and toward the hidden logic that carries the work.11

And this is where many AI companies will run into trouble. They will confuse visible intelligence with durable advantage. The two are not the same.

In fact, in AI, the easier it becomes to produce visible intelligence, the more important the hidden layers become:

workflow ownership

embeddedness

operational memory

proprietary data

switching pain

pricing power tied to outcomes

So the test gets harsher, not softer.

A feature can impress a buyer.

A workflow can keep one.

A system of record can trap one.

A private data loop can compound that advantage.

That, much more than the model itself, is where durable application companies are likely to be built.

4. Greenfield Beats Brownfield More Often Than Founders Want to Admit

One of the most useful distinctions in the a16z framework is the difference between greenfield and brownfield. Greenfield means the customer is starting fresh. Brownfield means the customer already has a system, a workflow, an incumbent vendor, and a lot of embedded habits. In AI, that distinction matters more than many founders want to hear. As AI workflows become more complex and more agentic, switching costs rise.12

Founders often assume that if their product is clearly more intelligent, more automated, or easier to use, buyers will naturally switch. But embedded software survives for reasons that have little to do with love. Systems of record endure because they sit inside approvals, reporting, permissions, compliance, document structures, billing logic, and years of trained behaviour. That is why legacy systems have historically held some of the strongest moats in software.13

At the same time, brownfield is not hopeless. The market is not standing still. Enterprise buyers are increasingly open to AI-native vendors because they often innovate faster and deliver better outcomes than incumbents trying to retrofit AI into old products. That gap showed up especially clearly in coding, where CIOs already described a stark difference between first-generation and second-generation tools.14

The trick is that both things can be true at once. AI-native products can be better, and brownfield replacement can still be hard. That is why the best openings are usually more specific than “we will replace category X.”

First, the customer is new. A new company has no stack yet, no habits, no sunk costs, and no incumbent to protect. That is the cleanest greenfield opportunity.

Second, the customer hits an inflection point. A tool that was “good enough” at ten employees breaks at fifty. A local setup breaks across jurisdictions. A manual workflow breaks under scale. That moment is often more important than product quality alone, because the buyer is already forced to reconsider the system.

Third, the workflow never had proper software in the first place. In that case, the startup is not replacing software. It is replacing fragmented manual work, outsourced labour, spreadsheet glue, or operational chaos. That is often the most attractive setup of all.

Fourth, the product links directly to revenue, compliance, or another business outcome so important that the switching pain becomes worth it. If the upside is large enough, brownfield resistance weakens.

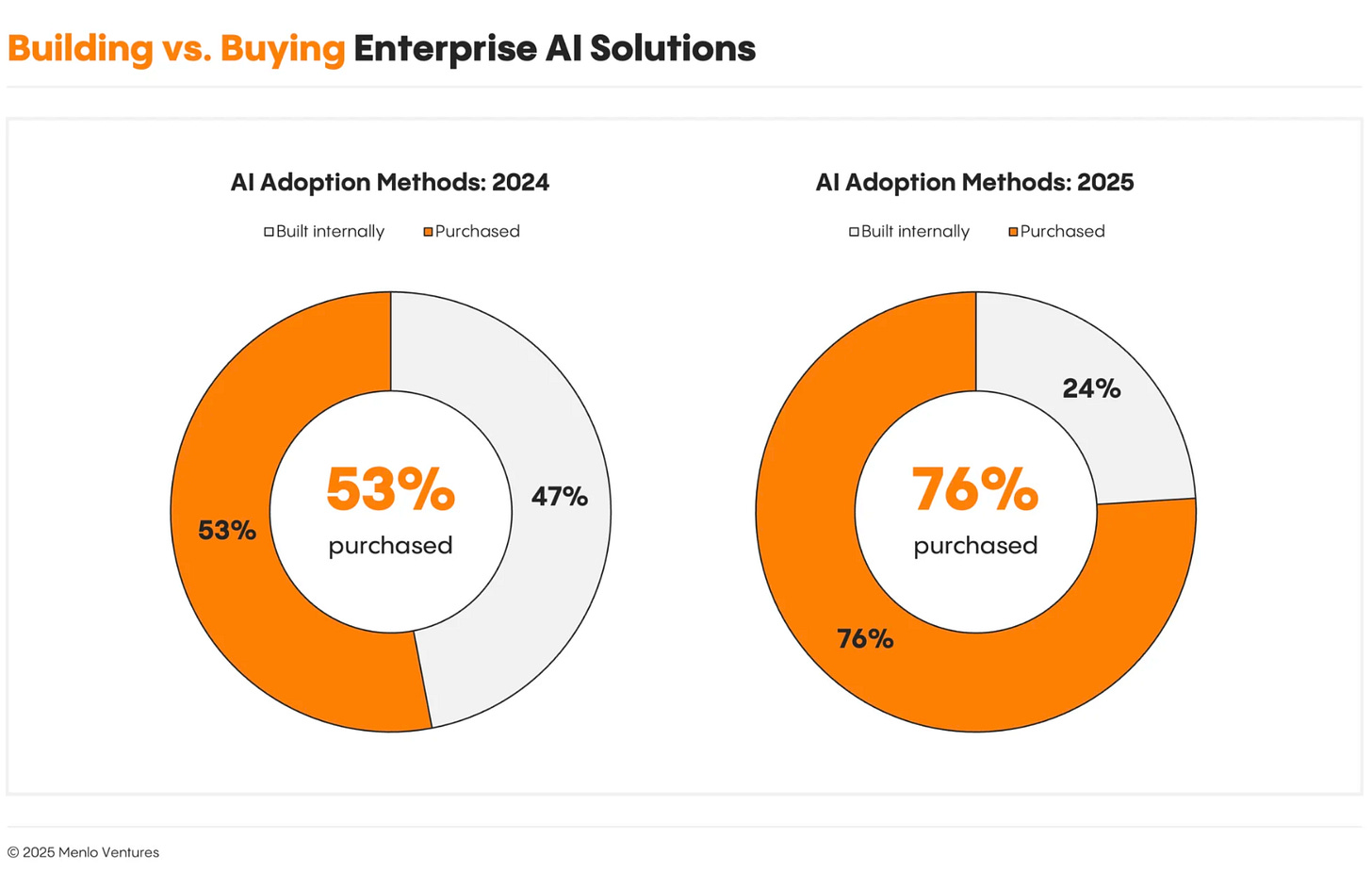

The broader pattern matches the latest enterprise data: the companies seeing the most value from AI are not just adding tools, they are redesigning workflows. And enterprises increasingly prefer buying AI applications rather than building everything themselves. That helps app-layer startups, but it does not magically remove migration pain inside mature systems.15

Menlo’s latest enterprise data shows the same thing from another angle: buyers are increasingly choosing ready-made AI products instead of building everything themselves.16

So yes, brownfield can work. But founders should stop romanticising it. Replacing an incumbent inside a live organisation is not just a product challenge. It is a migration challenge, a trust challenge, and often a political challenge. Greenfield beats brownfield more often than startup people like to admit — unless the buyer is already being forced to redesign the workflow anyway.17

5. The Model Is the Engine. The Company Is Built Elsewhere

The broad thesis is now hard to deny: AI has moved from spectacle to budget line. Enterprises spent heavily on generative AI in 2025, and a large share of that spend went to the application layer. At the same time, AI budgets have moved beyond pilots and innovation funds into recurring budget lines, and enterprises are increasingly buying off-the-shelf AI applications rather than treating the whole market as experimental.18

Menlo’s 2025 enterprise report puts numbers behind that shift: AI applications are now a real budget category, not just an experiment.19

But real spend does not automatically mean durable companies. Fast adoption can still be misleading when switching costs are low or novelty is doing too much of the work. Topline growth alone does not guarantee a healthy business, and some of the strongest companies will still take a slower path shaped by product complexity and competitive pressure.20

That is why the real value sits deeper than the model layer. Most organisations still have not embedded AI deeply enough into workflows and processes to create material enterprise-level benefits. The companies seeing the most value are redesigning workflows and treating AI as part of a broader operating change, not as a bolt-on feature.21

This is where the application-layer opportunity becomes more interesting — and more demanding. In the AI era, value moves away from the visible interface alone and toward the hidden logic that turns model capability into real business automation. The model may be the engine, but the company is built in workflow ownership, operating fit, permissions, memory, data, and the ability to carry actual work inside a real system.22

So the mistake is to treat the model as the product. It is not. The durable companies will be the ones that use the model to own a process, reduce real friction, fit into the customer’s operating environment, and get stronger as they accumulate workflow context, data, and trust. The flashy demo still matters. But the business is built elsewhere.23

🎚️🎚️🎚️🎚️ Producer’s Note

The takeaway for young founders is simple: stop chasing model hype and start owning real work.

This track sounds like the music I grew up with, which felt right for this piece. Because that is what this moment is: a transition you can feel before most people can explain it. While everyone argues about models, the real land grab is happening one layer up — in products that do real work, get embedded fast, and become hard to replace. If you are 22, 25, 28 and building now, this is not the moment to wait politely. Pick a painful workflow. Go deep. Build something people actually use. The lights may burn dimmer, but the window is wide open.

Spread the signal.

Fab 🚬


Sources

“Moat matter more” by A16Z

https://menlovc.com/perspective/2025-the-state-of-generative-ai-in-the-enterprise/

https://www.mckinsey.com/industries/technology-media-and-telecommunications/our-insights/the-ai-centric-imperative-navigating-the-next-software-frontier

https://www.mckinsey.com/industries/technology-media-and-telecommunications/our-insights/the-ai-centric-imperative-navigating-the-next-software-frontier

https://www.ark-invest.com/articles/analyst-research/ai-will-determine-the-future-of-software-and-cloud-spending?utm_source=chatgpt.com

https://www.pwc.com/gx/en/issues/artificial-intelligence/job-barometer/2025/report.pdf

https://openai.com/business/guides-and-resources/a-practical-guide-to-building-ai-agents/

https://menlovc.com/perspective/ais-ui-problem-is-actually-a-new-era-of-software/

https://menlovc.com/perspective/2025-the-state-of-generative-ai-in-the-enterprise/

https://menlovc.com/perspective/2025-the-state-of-generative-ai-in-the-enterprise/